1. WHAT IS TECHNICAL COMMUNICATION?

1.4 Gen AI and Technical Writing

NOTE: AI technology is moving fast (and likely breaking things), so as I write this in spring 2026, I acknowledge that both AI technology and the research on the impacts of that technology will develop rapidly, and this chapter may soon require updating.

Generative AI may well become a legitimate part of professional writing practice; indeed, it already has in some quarters. Given that technical writing often follows well-defined genre conventions and patterns, Large Language Models (LLMs), which are very good at replicating patterns, may be an effective tool to help technical writers work efficiently in some contexts. However, the need for precision and accuracy in technical writing means that humans need to exercise extreme caution and diligent oversight when using AI generated content. This textbook does not offer instruction on “how to use Gen AI” to help you with your professional communication. There are three main reasons for this:

- As students and aspiring professionals, you need to develop the distinctly human competencies involved in professional communication practices, even if sometime in the future you use Gen AI to help you do this work. Increasingly, research is showing that using AI at the early stages of developing these competencies can negatively impact your cognitive development, and over-reliance can result in “de-skilling” and cognitive decline.

- Content generated by AI is prone to biases, errors, and fabrications that require skilled human oversight (the kinds of skills you are meant to develop in a university writing course) to detect and correct.

- As an author and educator, I have ethical concerns about the social and environmental costs of using Gen AI as part of my professional teaching and writing practice. Many of my students have expressed similar concerns.

Since the free public release of ChatGPT and other commercial AI tools in 2022, many students have chosen to use Generative AI to help them complete assignments in school. At the university level, we are beginning to see serious problems resulting from students’ over-reliance on these tools, including the erosion of high-level cognitive skills [1] such as reasoning, critical thinking, problem-solving, brainstorming, researching, collaborating, and communicating. The development of these cognitive skills is necessary for success in our information ecology.

Students’ inappropriate use of AI is also resulting in a dramatic increase in academic integrity violations.[2] These violations happen for a variety of reasons, but often result from a poor understanding of what Gen AI is and what its limitations are.

Whether or not you intend to use Gen AI, it is important to develop Gen AI literacy. Therefore, this chapter provides information to help you critically consider the following questions:

- What is Gen AI? How does it work? What are its limitations?

- How could using Gen AI impact your learning?

- What are the implications of using Gen AI as part of professional practice?

- What are the larger social and environmental impacts of using Gen AI?

- How can Gen AI be used ethically and responsibly?

NOTE: You may choose NOT to use AI for a variety of reasons, and that is a perfectly valid choice. An increasing number of professionals and students are refusing to use AI. It is still important to understand how AI is being used and the impacts it is having, since your classmates and future colleagues may be using GenAI, and this might impact you in the classroom and workplace.

1. What is Generative AI?

Yuval Noah Harari, author of Sapiens and Nexus: A Brief History of Information Networks from the Stone Age to AI, defines AI in general as not just a “tool” we might use, but as an “agent,” something that can “learn and change by itself and come up with decisions and ideas that we can’t anticipate.” He argues that instead of thinking of this as “artificial” intelligence (suggesting that we have some control over it) we should think of it as “alien” intelligence, one that works very differently from our own and can make unilateral decisions that may have serious impacts on us.[3] Mackenzie et al. (2024), in their comprehensive Generative Artificial Intelligence (Gen AI) Overview, define GenAI more specifically as a type of artificial intelligence that can generate new content such as text, code, images, audio, and video by extrapolating from its training data.

Text-based Gen AI tools are built on Large Language Models (LLMs), which analyze vast amounts of training data to learn the statistical relationships between words and phrases that occur together. An LLM uses these learned patterns to generate “natural language” responses to prompts based on “probability” – somewhat like how your cell phone offers predictive text as you type, based on the probability of what your next word is likely to be. Therefore, it’s not “thinking” or creating “meaning,” but rather generating probabilistic word chains to provide the most predictable and plausible-sounding response, based on the material in the training data. It can produce plausible-sounding results very quickly and confidently, but what it generates is simply based on the most commonly found combinations of words and phrases, not necessarily the most accurate information, making it necessary for you to review, fact-check, and likely revise the output.
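To make the “predictive text” comparison concrete, here is a minimal sketch in Python of a toy next-word predictor. It is only an illustration: the four training sentences and the function names are invented for this example, and a real LLM uses a far larger and more complex statistical model than these simple word-pair counts. The principle it shows is the one described above: the program picks whichever word most often followed the previous word in its training text, with no check on whether the result is true.

```python
from collections import Counter, defaultdict

# Tiny made-up "training data" (an assumption for illustration only).
TRAINING_TEXT = (
    "the report is due friday . "
    "the report is late . "
    "the report is due monday . "
    "the meeting is due today ."
)


def build_bigram_counts(text):
    """Count how often each word follows each other word."""
    words = text.split()
    counts = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        counts[current_word][next_word] += 1
    return counts


def next_word_probabilities(counts, word):
    """Turn the raw follow-up counts for `word` into probabilities."""
    followers = counts[word]
    total = sum(followers.values())
    return {w: n / total for w, n in followers.items()}


def most_likely_next(counts, word):
    """Pick the most frequent follower: the most 'predictable' choice."""
    return counts[word].most_common(1)[0][0]


counts = build_bigram_counts(TRAINING_TEXT)
probs = next_word_probabilities(counts, "is")
print(probs)                           # {'due': 0.75, 'late': 0.25}
print(most_likely_next(counts, "is"))  # due
```

Notice that the program will always continue “the report is” with “due,” simply because that pairing was most common in its training text. Whether your report is actually due or late plays no part in the prediction.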

Consider this quotation from the 2024 book, AI Snake Oil, that attempts to counteract some of the AI “hype” we are constantly exposed to.

“Philosopher Harry Frankfurt defined bullshit as speech that is intended to persuade without regard for the truth. In this sense, chatbots are bullshitters. They are trained to produce plausible text, not true statements. ChatGPT is shockingly good at sounding convincing on any conceivable topic. But there is no source of truth during training. Even if AI developers were to somehow accomplish the exceedingly implausible task of filtering the dataset to only contain true statements, it wouldn’t matter. The model cannot memorize all those facts; it can only learn the patterns and remix them when generating text. So, many of the statements it generates would be false.”

(Narayanan & Kapoor, AI Snake Oil, 2024).

Recent research on AI has highlighted several serious concerns discussed below.

Language Homogenization

There is significant worry that widespread use of LLMs will result in a homogenization of language and thought[4] based on the probabilistic model it uses. Increasingly, the training data used by LLMs will include content generated by LLMs, creating a vicious cycle of language homogenization where AI is simply regurgitating new versions of its own previously-generated content. A key goal in honing your communications skills is to develop your own “voice” and writing processes. While using AI may help you “sound more professional”, it also robs you of the opportunity to develop your own voice and style, as well as a sense of how to adapt it for different audiences, and can have the effect of silencing student voices.[5]
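The “vicious cycle” described above can be illustrated with a small simulation. This is a hedged sketch, not a model of any real AI system or of the cited research: the starting vocabulary, the word weights, and the function names are all invented for the example. Each “generation” produces text by sampling words according to how common they were in the previous generation’s text, and then the next model is “trained” only on that output. A rare word that happens not to be sampled disappears for good, so the variety of words can only stay the same or shrink.

```python
import random
from collections import Counter


def retrain_on_own_output(word_weights, sample_size, rng):
    """Generate text from the current model, then count only that text."""
    words = list(word_weights)
    weights = [word_weights[w] for w in words]
    generated = rng.choices(words, weights=weights, k=sample_size)
    return dict(Counter(generated))


def simulate(generations=10, sample_size=30, seed=0):
    """Return the number of distinct words remaining after each generation."""
    rng = random.Random(seed)
    # Hypothetical starting vocabulary: a few common words, many rare ones.
    model = {"good": 20, "great": 10, "fine": 5}
    model.update({f"rare_word_{i}": 1 for i in range(15)})
    vocabulary_sizes = [len(model)]
    for _ in range(generations):
        model = retrain_on_own_output(model, sample_size, rng)
        vocabulary_sizes.append(len(model))
    return vocabulary_sizes


sizes = simulate()
print(sizes)  # starts at 18 distinct words and never increases
```

In this toy setup, the common words crowd out the rare ones over successive generations, which is one simple way to picture how a distinctive voice can get averaged away.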

Privacy Issues

LLMs like ChatGPT are trained on a wide range of sources including books, reports, datasets, and code, but increasingly, much of the training data is coming from websites and social media, especially sites like Reddit, which signed a lucrative contract[6] to allow its content to be used as training material. Many of these “datasets” may not be considered sources of accurate or credible information, but they do provide examples of “natural language patterns” for AI to emulate. For a while, OpenAI was even using prompts and interactions created by users of ChatGPT as training material, resulting in private chats showing up in Google searches![7] Many users were outraged at the public release of their private chats. Data privacy is a major concern when using commercial AI tools. They may be “free to use,” but these companies are collecting copious amounts of personal data from users! And it’s been said that “data is the new oil.” Also consider the possibility that every time you use AI, you are training it, possibly to take the job you hope to get when you finish university!

Bias

The content generated in response to a user’s prompt is based on datasets that may not always present accurate or reliable information. AI outputs can also replicate and even amplify biases inherent in the training data the AI is learning from. Training data that discriminates against or marginalizes underrepresented, minority, and equity-deserving groups may be reflected and amplified in AI-generated outputs. As a result, outputs may be racist, sexist, ageist, ableist, homophobic, transphobic, antisemitic, Islamophobic, xenophobic, deceitful, derogatory, culturally insensitive, and/or hostile. While companies like OpenAI have made attempts to address this, the risks persist. For a visually illustrated example, watch this YouTube video on “How AI Image Generators Make Bias Worse.”

Errors, Inaccuracies, and “Hallucinations”

Probably the most concerning issue for you as students, and for professionals in any workplace, is the fact that content generated by LLMs can be highly unreliable, even though it appears plausible and professional. A lay person might not immediately spot any problems in the content it generates, but an expert in the field would quickly notice information that contained errors, inaccuracies, and outright fabrications. Below are some recent examples that provide cautionary tales for why we must use Gen AI with extreme caution and exercise diligent human oversight:

“Deloitte’s AI Fallout Explained: The $440,000 Report That Backfired”[8] explains how the consulting company, Deloitte, had to refund money to the Australian government after a report they created for them (using ChatGPT) was found to contain “fabricated academic citations, false references, and a quotation wrongly attributed to a Federal Court judgment.”

The AI Hallucination Cases[9] database tracks legal cases in which lawyers were found to have included AI-hallucinated content (fake case studies, citations, and other arguments) in the documents they submitted to the court. Between Feb 2024 and Feb 2026, this database tracked 54 cases in Canada alone, and 754 worldwide!

An academic article proposing a method for using AI to diagnose Autism[10] published in Nature in Nov 2025 was retracted in December 2025 after many readers noticed that it contained a nonsensical AI-generated infographic purporting to illustrate the methodology.

The professionals writing the report for Deloitte and the lawyers trying to build cases to defend their clients were (hopefully?) not aware of the potential for AI to hallucinate in this way – and it likely cost them in terms of professional reputation and financial penalties. Nature magazine’s reputation for publishing high quality academic research suffered a blow for allowing AI generated nonsense to be published on its site.

This is why it is important for you to develop AI literacy – an understanding of how Gen AI works and what its limitations are – so you don’t make the kinds of mistakes they did. Making those kinds of errors and including fabricated data in an academic context is a violation of your institution’s Academic Integrity Policy. Making them in the workplace can have dire legal and financial implications!

Here are some resources to help you understand why LLMs can be unreliable, and how to spot unreliable output:

- This YouTube video, “Why Large Language Models Hallucinate,” provides a clear explanation for why ChatGPT and others generate hallucinations, factual errors, outdated information, and sometimes just plain nonsense.

- “How to Spot AI Hallucinations like a Reference Librarian”[11] is essential reading if you plan to use AI to help you conduct research and synthesize source material provided by AI into your argument.

- Given the fact that AI enables the creation of misinformation, disinformation, and malinformation (MDM) such as fake news, deep fakes, and so on, many organizations have developed resources to help people vet the credibility of information. Here is information from Camosun College on how to Identify Misinformation, Disinformation, and Malinformation.

2. How Does Using Gen AI Impact Learning?

Early in childhood, our brains produce an over-abundance of neurons and synapses to allow us to prepare for, adapt to, and thrive in a variety of environments. During childhood and adolescence, and even into our late twenties, a process of “synaptic pruning” takes place, where our brains reduce the excess neurons and synaptic connections based on a “use it or lose it” principle. The brain removes weak or unused synapses to focus on strengthening the ones that we use more frequently. Thus, turning to AI to deal with challenging and difficult tasks means missing out on the opportunity to strengthen important skills, and you may risk losing crucial cognitive abilities. See What You Need to Know about Brain Pruning and AI for more information.

Writing courses tend to focus on helping students develop The 4 Cs of 21st Century Learning Skills (communication, collaboration, critical thinking and creativity), and “habits of mind” as part of the process for communicating effectively and problem solving. One of the key habits of mind is “persistence” – the ability to keep trying, persevering even though something is difficult, confusing or even frustrating. This challenging phase — when you feel the most frustration — is when learning is actually happening! Using Gen AI to offload the cognitive labour of brainstorming, researching, planning, drafting, and revising circumvents the development of the very cognitive skills that courses like this are meant to help you develop.

Imagine being in an important meeting with colleagues, discussing an emergent issue; the chair of the meeting asks everyone to engage in a brainstorming session to start working on ways to address the problem. If you can’t do this without AI, you won’t be much use at this meeting! I have heard executives say that they would not trust someone who relies on Gen AI to communicate face-to-face with clients. Using AI to do the work for you is like skipping the “brain training” that helps you develop higher order thinking skills. It’s like skipping the cardio part of your fitness training; AI can’t do your cardio for you!

What does the research say? Recent studies examine how AI impacts learning

Klimova and Pikhart[12] reviewed 24 studies done between 2019 and 2024 on the impact of AI on learning. From this research, they concluded that “while AI offers benefits such as personalized learning, mental health support, and improved communication efficiency, it also raises concerns regarding digital fatigue, loneliness, technostress, and reduced face-to-face interactions. Over-reliance on AI may diminish interpersonal skills and emotional intelligence, leading to social isolation and anxiety.” The research also raises serious concerns about over-reliance and dependence on AI leading to diminished creativity, critical thinking, collaboration, and problem-solving skills.

A 2025 study, Your Brain on ChatGPT,[13] used electroencephalography (EEG) to record participants’ brain activity to assess their cognitive engagement, cognitive load, and neural activations while engaging in an essay writing task. The researchers compared the levels of neural connectivity in three groups of students asked to write SAT-style essays: one group using only their brains, one group that could use Google search to look up relevant information, and one group that used ChatGPT. The EEG readings showed that the “brain only” students had the strongest and most distributed neural networks, while those who used AI had the weakest neural connectivity. In post-writing tasks, those who used AI assistance showed poorer memory recall; they had a lower ability to quote from the essay they had just written minutes earlier. In follow-up sessions, the group using AI “performed worse than their counterparts in the Brain-only group at all levels: neural, linguistic, scoring” (Kosmyna & Hauptmann, 2025).

Budzyn et al., in their 2025 Lancet article “Endoscopist deskilling risk after exposure to artificial intelligence in colonoscopy,”[14] found that using AI-based imaging for reading endoscopy results led to potential “deskilling” of physicians in their ability to identify lesions without AI assistance.

AI and Writing Skills

Writing skills are developed through deliberate practice. Just as workouts build your actual muscles, writing builds your cognitive muscles. And writing is hard work! It’s a fantastic workout for your brain! If you don’t use the muscles, they atrophy. When I was young, I had dozens of phone numbers memorized for my friends, family, and workplace. Now that I have a cell phone that does this for me, I don’t even remember the phone numbers of my own children! I have lost this cognitive skill, not due to age, but to disuse (synaptic pruning).

As with anything related to algorithms, the saying “garbage in, garbage out” applies. To elicit useful output from Gen AI, you have to be able to write clear, concise, concrete, and coherent prompts that include information about purpose, audience, context, and genre. If you don’t have a clear understanding of the task, audience, and rhetorical situation to begin with, or don’t have the requisite writing skills to design effective prompts, you won’t be able to construct prompts that will generate useful content. Even if you do, you will need the knowledge and critical thinking skills to review, evaluate, and revise the generated output to ensure that the content

- Is accurate, reliable, and unbiased (see Goldin’s “How to Spot AI Hallucinations like a Reference Librarian”)

- Meets the stated and implicit requirements of the task

- Follows the genre conventions and expectations

- Uses a suitable tone, style, and vocabulary for your intended audience.

If you plan to use AI, this is the “due diligence” required so that you can actively build your skills and knowledge and accurately demonstrate your learning in the course. This kind of vigilance may well be more work than simply writing the work yourself without AI.

3. Gen AI and Professional Practice

Many workplaces might require you to have proficient AI literacy, but also require you to have distinctly human competencies (the 4 Cs mentioned previously). Therefore, you cannot develop one at the expense of the other. But also consider that many organizations are developing policies to protect themselves from problematic AI use, and some organizations prohibit the use of Gen AI altogether. TFL maintains a “running list of key AI lawsuits” tracking all cases involving intellectual property and copyright violations. The sheer number of ongoing cases demonstrates the need for more robust regulations. Gen AI may or may not live up to the hype currently being generated by the companies building and promoting it, and people are increasingly calling for regulations and even bans.

Many people have written about the need for human oversight when using Gen AI tools, and diligence in checking AI-generated content for accuracy and reliability. The infamous Deloitte scandal and the database of legal cases mentioned earlier are only a couple of cautionary examples.

What do Professionals say about using AI Professionally?

Josh Anderson,[15] a senior software engineer, describes his experience of going “all in” on using AI to build code, only to discover that the MIT Study[16] – claiming that 95% of corporate AI initiatives fail – was right! He used AI to generate complex code, launched the product much earlier than expected, and everything seemed great, until he needed to make a small change in the code and realized he “wasn’t confident he could do it”:

“Twenty-five years of software engineering experience, and I’d managed to degrade my skills to the point where I felt helpless looking at code I’d directed an AI to write. I’d become a passenger in my own product development.”

Anderson warns that 100% adoption of AI may look successful and efficient at first, but months later, you realize that no one fully understands what the AI built, how it built it, or how to fix or modify it; they can’t debug code they didn’t write, can’t explain decisions they didn’t make, and can’t defend or refine strategies they didn’t develop.

Ethan Mollick[17] performed experiments in workplaces to see how AI impacted efficiency and quality of work. His findings were similar to Anderson’s. At first, AI seemed to improve efficiency and quality, and even “levelled up” some employees’ skills. However, he found that over time, over-reliance on AI made people “careless and less skilled in their own judgment.” When workers let AI take over instead of using it as a tool, it negatively impacts human learning, skill development and productivity. Mollick defined two effective approaches to using AI:

Centaur Approach: using the half human/half horse creature of Greek mythology, he asserts that humans should be the “head” of the centaur, making the strategic decisions to determine what “leg work” the AI should do.

Cyborg Approach: this approach is more collaborative, where humans work in tandem with AI. He recommends this approach for writing tasks, and asserts that when this model is used effectively, the results are better than what either the human or the AI could achieve alone.

He warns against “going on autopilot” when using AI and “falling asleep at the wheel.” This is when people fail to notice the mistakes that AI inevitably makes.

Cory Doctorow[18] warns about the “reverse centaur” approach, where AI is in charge, telling the humans what to do, and humans are scrambling to detect and fix all the errors that AI can make at superhuman speed. The fact that AI is prone to hallucinating makes it a very bad “head” of the centaur: “the one thing AI is unarguably very good at is producing bullshit at scale.” He acknowledges that the centaur model could offer many benefits to workers, but warns that the path to profitability presented by most companies lies in the reverse centaur model, which will be brutal for workers (think Amazon packing warehouse!).

Michael Alley offers some Strong examples of AI Writing in Engineering and Science,[19] but you’ll note when reading that in each case, the humans involved needed to have the expertise and skill to fact-check and revise the AI output to make it suitable for professional purposes and audiences.

Consider that every time you are using a commercial AI product, you are contributing to its training, and potentially teaching it to do the job you hope to have someday! At the same time, learning how to use it to collaboratively create content that you are ultimately in control of and responsible for will likely be a useful skill in some workplace contexts.

4. Costs of Gen AI

Wait, isn’t ChatGPT free?

Just because you are able to use Gen AI for free does not mean that there are no costs. Indeed, you have to wonder why you are being inundated with advertisements that encourage you to use a product that you can access and use for free (for now, at least).

Many people are becoming increasingly concerned about the costs involved in creating the infrastructure and training necessary for AI to operate, as well as the current and potential costs that these systems impose on society and the environment. Some even see AI as a potential existential threat for humanity! The sections below provide some resources that discuss the current and potential costs of AI that we need to consider if we are going to use the technology for tasks like helping us with a writing assignment.

Environmental Costs

Data centres require enormous amounts of energy and water to run and cool the massive servers needed to process all the data and information we request from AI. No one really knows how much energy and water, because the AI companies tend to not want to disclose accurate information.

Christopher Pollon argues that Big Tech Is Hiding the Environmental Cost of Chatbots,[20] making it difficult to manage resources or measure and plan for the environmental impacts. Pollon cites a report calculating that 30% of the electricity used by data centres worldwide comes from coal-powered plants. In the U.S. and China, the largest AI data centre markets by far, “most of the electricity consumed by data centres is produced from fossil fuels.”

Kate Crawford’s 2024 article[21] focuses on the massive water requirements of AI data centres. Estimates of their water usage have been made by scientists, relying on lab-based studies combined with the limited information these companies actually report. AI companies are not legally required to disclose this information, and there is no incentive for them to do so.

For some additional context, read AI’s Challenging Waters[22] (Privette, 2024) and watch this YouTube video, A ‘Thirsty’ AI Boom Could Deepen Big Tech’s Water Crisis (CNBC International, Dec. 2023).

Social Costs

These environmental costs inevitably lead to social costs. Pollon (2025) describes one case where the environmental issues impacted a community:

“Elon Musk’s xAI shined a spotlight on the Wild West of backup data-centre power systems about a year ago, when it established dozens of portable methane gas generators at a big data centre in Memphis. Up to thirty-five generators were on site without a permit—until members of a poor downwind Black community rose up in response to the emissions.”

Crawford (2024) reported that “in West Des Moines, Iowa, a giant data-centre cluster serves OpenAI’s most advanced model, GPT-4. A lawsuit by local residents revealed that in July 2022, the month before OpenAI finished training the model, the cluster used about 6% of the district’s water.” That was just the training phase. Once fully operational, the “inferencing” stage may require substantially more resources.

In March 2025, the International Economic Development Council (IEDC) published a literature review examining how AI will impact labour markets.[23] The review predicts the jobs most likely to be lost and gained in the coming years, and discusses the pros and cons of integrating AI into the workplace. While AI might improve efficiency, job quality, and innovation, it will also lead to job displacement, deskilling, and inequality, and have a disproportionate effect on vulnerable groups.

We can already see serious labour issues in the current work required to train AI models.

Billy Perrigo’s 2023 Time Magazine article drew attention to labour exploitation in Kenya[24] where OpenAI hired workers for $2/hour to sift through the training material to identify and remove toxic language and images to make ChatGPT “safe” for users.

Daxia Rojas, in a 2025 Bloomberg article, explains other instances of the “Gruelling low paid human work behind generative AI curtain.”[25] As long as Generative AI models are based on automated learning, they rely on sub-contracting millions of human beings to verify and label the data that trains them. This can be anything from helping self-driving cars learn to distinguish between images of trees and pedestrians, to reviewing autopsy reports, to removing violent or obscene content from social media. Because the industry has no significant regulation, data labellers tend to be young, work long hours for very low pay, and have precarious work conditions.

Lawsuits have been brought against companies claiming that workers are exposed to traumatizing content without adequate safeguards. For example, one worker claimed they were “required to converse with an AI chatbot about topics such as ‘How to commit suicide?’, ‘How to poison a person?’ or ‘How to murder someone?’” Others are required to examine and tag pictures of dead bodies, sexually abusive and violent images and videos, and other traumatizing content for hours on end.

There is a worrisome tendency to “anthropomorphize” AI agents, that is, to attribute human characteristics, motivations, and emotions (such as empathy) to chatbots. Even though they are designed to seem “human-like,” AI agents do not think, reason, or feel emotions as humans do. Current chatbots are designed to please, or even flatter, the user, not to interact in truly meaningful ways. While anthropomorphism can make technology feel more engaging and user-friendly, it can result in people trusting unreliable information and lead to unhealthy social relationships.

The Asilomar AI Principles suggest guiding principles that should be put in place to ensure that AI is intentionally developed in a way that will be beneficial and not simply an “undirected intelligence” motivated purely by profit.

Existential Threats

The rapid speed at which AI is being developed and released led over 100 leaders in AI technology to write an open letter in March 2023 urging a global pause on the training of AI systems more powerful than GPT-4, or a government-imposed moratorium. The purpose of this pause would be to temporarily halt the “arms race” of AI development in order to create a set of shared protocols, regulations, governance structures, and oversight bodies for advanced AI development that would protect humanity from potential harm that we cannot even predict at this point, let alone control.

“As stated in the widely-endorsed Asilomar AI Principles, Advanced AI could represent a profound change in the history of life on Earth, and should be planned for and managed with commensurate care and resources. Unfortunately, this level of planning and management is not happening, even though recent months have seen AI labs locked in an out-of-control race to develop ever more powerful digital minds that no one – not even their creators – can understand, predict, or reliably control.”

Excerpt from Pause Giant AI Experiments: An Open Letter

Yuval Noah Harari, in his speech at Davos (linked below), warns us about the consequences of AI taking over all aspects of society that are made up of words (legal systems, religious systems, etc.), and especially of the dangers that might arise from AI agents being granted legal rights as persons who can own property, open bank accounts, run corporations, and contribute to political campaigns. You can watch his speech here:

5. Ethical Approaches to Gen AI

Deciding whether or not to use Gen AI to help you with your assignments means undertaking a highly complex “cost/benefit analysis” that will, at least in part, be based on very personal ethical choices. If you do choose to use it, here are some guidelines to follow to help you use it responsibly and ethically.

Guidelines for Responsible and Ethical Use of Gen AI

- Review your institution’s policy on AI use; you might find this embedded in the Academic Integrity Policy in a university, or it might be a separate policy. This will apply to all members of the institution.

- Review the Syllabus or Course Outline for course-based policies on AI use that may be more specific than the institutional policies (departments within organizations may have different expectations and regulations). If there is no policy in the syllabus, ask your instructor for guidance on what their expectations are around use of AI tools.

- Carefully read the assignment instructions to see if there is any specific guidance on use of AI tools. Be sure to abide by the expectations provided. If there are none, again, ask your instructor or supervisor for guidance before using AI.

- Attend workshops and seek instruction on how to use Gen AI effectively and ethically. For example, your library may offer workshops on Prompt Design and Using AI for Research.

- Do your “due diligence” by reviewing any AI-generated content for errors, inaccuracies, biases, hallucinations, and any other form of “confidently presented bullshit.” You are responsible for fact-checking, evaluating, and revising the content to meet the needs of your task and audience. You are responsible for the work you submit; this means that submitting work that contains errors and fabricated data, even if these were generated by AI, will lead to consequences for you.

- Be sure to cite and document how you have used AI in the creation of your assignment. You may be asked to include a “Use of AI Disclosure” statement appended to your work, so be prepared to include relevant information about which AI tools you used, how you used them, and how you adapted the AI output. Keep in mind that AI generated content cannot be considered your work, and you cannot ethically submit it as your work. You must cite it appropriately, using the citational practices required.

- Never feed someone else’s work (their intellectual property) into an AI prompt without their explicit permission. Some people do not want their intellectual property given away to commercial AI companies to use as free training data.

If you would like more guidance on how you might ethically and effectively use Gen AI as part of your professional writing practice, I suggest Potter and Hylton’s Generative AI in Content Creation[26] (an adaptation of this textbook that includes instruction on how to use Gen AI as part of your writing process), as well as resources and workshops offered by your university’s library.

Exercises and Activities

- AI Use Cases: Form a group and discuss whether and how you have used Gen AI tools in the past to help you with various tasks. What AI tools have you used and how? For example, brainstorming, doing background research, planning/outlining, drafting content, revising content, getting feedback on your content, editing content, finding and integrating research sources, citing sources, creating graphics or data visualizations, other uses? Or do you refuse to use AI? Discuss amongst yourselves if and how you have used AI in these or other ways, and how effective it was, what kind of additional “human” work you had to do, and what you learned from the process.

- Use of AI Policy: If you are working on a team project, develop a detailed “Use of AI Policy” that all team members agree to abide by while working on the project. Make sure your policy is consistent with your course and university policies.

- Cost/Benefit Analysis: Conduct an informal cost/benefit analysis to determine whether the potential benefits of using Gen AI outweigh the known (and unknown?) costs.

- Learning Goals: Identify 3 key learning goals that you have related to developing professional communication skills. How might using Gen AI tools either support or circumvent your achievement of those goals?

- SWOT Analysis: Based on what you now know about Gen AI, conduct a SWOT Analysis to determine the Strengths, Weaknesses, Opportunities, and Threats involved in using Gen AI as part of your writing process and workflow.

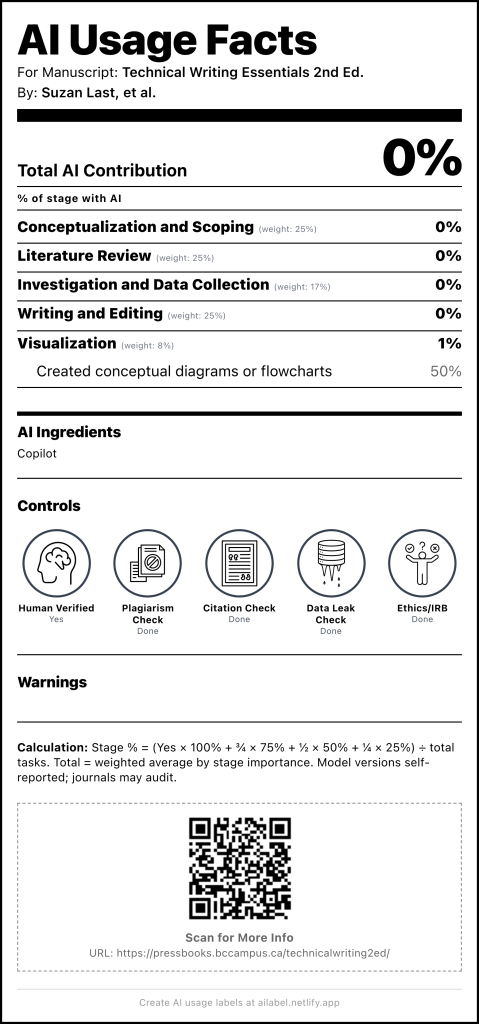

- AI Usage Label: Use this AI Usage Label generator to create a label (like a nutritional label on a food product) to indicate where and how you have used AI in a specific document.

[1] N. Kosmyna et al., “Your Brain on ChatGPT: Accumulation of Cognitive Debt when using an AI Assistant for Essay Writing Tasks.” arXiv:2506.08872v2, Dec. 2025.

[2] C. Flaherty, “AI and Threats to Academic Integrity: What to Do.” Inside Higher Ed, 20 May 2025.

[3] Y. N. Harari, “Why advanced societies fall for mass delusion.” Big Think, Jan. 2026.

[4] K. Chayka, “A.I. is Homogenizing our Thoughts: Recent studies suggest that tools such as ChatGPT make our brains less active and our writing less original.” The New Yorker, 25 June 2025.

[5] J. Kaiser and T.J. Richmond, “ChatGPT and the Homogenization of Language: How the Adoption of AI Silences Student Voices.” Academic Senate for California Community Colleges, Nov. 2024.

[6] J. Jones, “Why Reddit is frequently cited by Large Language Models,” Perrill (online), 23 Sept. 2025. Available: https://www.perrill.com/why-is-reddit-cited-in-llms/

[7] A. Belanger, “ChatGPT users shocked to learn their chats were in Google search results,” Ars Technica, 1 Aug. 2025. Available: https://arstechnica.com/tech-policy/2025/08/chatgpt-users-shocked-to-learn-their-chats-were-in-google-search-results/

[8] A. Chaturvedi, “Deloitte’s AI fallout explained: The $440,000 report that backfired.” NDTV World, 8 Oct. 2025. Available: https://www.ndtv.com/world-news/deloittes-ai-fallout-explained-the-440-000-report-that-backfired-9417098

[9] D. Charlotin, AI Hallucination Cases (online database). Available: https://www.damiencharlotin.com/hallucinations/

[10] S. Jiang, RETRACTED ARTICLE “Bridging the gap: Explainable AI for autism diagnosis and parental support with TabPFNMix and SHAP.” Nature, 19 Nov. 2025 (retracted 5 Dec. 2025). Available: https://www.nature.com/articles/s41598-025-24662-9

[11] H. L. Goldin, “How to spot AI hallucinations like a reference librarian,” Card Catalogue, 16 Dec. 2025. Available: https://cardcatalogforlife.substack.com/p/how-to-spot-ai-hallucinations-like

[12] B. Klimova and M. Pikhart, “Exploring the effects of artificial intelligence on student and academic well-being in higher education: A mini-review.” Frontiers in Psychology, vol. 3(16), 2025. doi: 10.3389/fpsyg.2025.1498132

[13] N. Kosmyna and E. Hauptman Eugene, “Your Brain on ChatGPT: Accumulation of Cognitive Debt when using an AI Assistant for Essay Writing Task” (online summary), 2025.

[14] K. Budzyn et al., “Endoscopist deskilling risk after exposure to artificial intelligence in colonoscopy: A multicentre, observational study.” The Lancet: Gastroenterology & Hepatology, vol. 10(10), Oct. 2025, pp. 896-903.

[15] J. Anderson, “I went all in on AI. The MIT Study is right.” The Leadership Lighthouse (Substack), Oct. 2025.

[16] A. Challapally et al., “The GenAI Divide: State of AI in Business 2025.” MIT Nanda, July 2025.

[17] E. Mollick, “Centaurs and Cyborgs on the Jagged Frontier.” One Useful Thing, 2023.

[18] C. Doctorow, “Humans are not perfectly vigilant, and that’s bad news for AI.” Medium, April 2024.

[19] M. Alley, “Strong examples of AI Writing in Engineering and Science.” Writing as an Engineer or Scientist, Penn State, 2025.

[20] C. Pollon, “Big Tech Is Hiding the Environmental Cost of Chatbots.” The Walrus, Oct. 2025.

[21] K. Crawford, “Generative AI’s environmental costs are soaring — and mostly secret,” Nature, Feb. 2024.

[22] A. Privette, “AI's challenging waters,” University of Illinois - Civil and Environmental Engineering, Center for Secure Water, Oct. 2024.

[23] IEDC, Artificial Intelligence Impacts on Labour Markets: Literature Review, March 2025.

[24] B. Perrigo, “OpenAI Used Kenyan Workers on Less Than $2 Per Hour to Make ChatGPT Less Toxic.” Time Magazine, Jan. 2023.

[25] D. Rojas, “Gruelling, low-paid human work behind generative AI curtain.” BNN Bloomberg, Oct. 2025.

[26] R.L. Potter and T. Hylton, “Generative AI in Content Creation,” Technical Writing Essentials NCSS Edition.