Chapter 15: A/B Testing

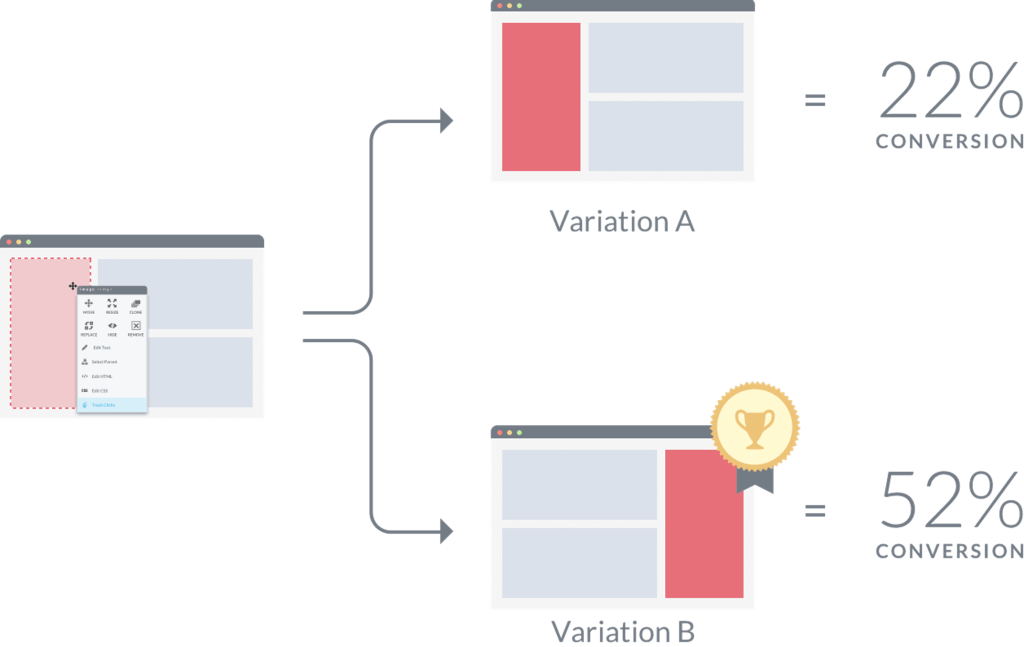

In its simplest form, A/B testing (also known as split testing) is the process of comparing two versions of the same message (e.g., a webpage element, an email, or a fundraising letter), known as “Variant A” and “Variant B,” by measuring users’ responses to each and concluding which of the two is more effective.

Why You Should A/B

Many marketing, fundraising, and communications departments rely on A/B testing because it is one of the most effective ways to tailor your approach to target audiences. While data and trend analyses are important elements in designing engaging web pages or email broadcasts, A/B testing provides practical, tangible evidence of your most impactful communications techniques.

Modern online analytics also make evaluation convenient, so you can assess your A/B tests accurately. With analytics, you can easily monitor key metrics:

- how many people were exposed to each version

- how long they interacted and engaged

- what percentage completed the intended task and converted
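As a rough illustration of the three metrics listed above, the following Python sketch summarizes a handful of visits per variant. The `Visit` record, its field names, and the sample data are all assumptions made up for this example; a real analytics tool would supply its own data format.

```python
from dataclasses import dataclass


@dataclass
class Visit:
    """One exposure to a variant (hypothetical record layout)."""
    variant: str            # "A" or "B"
    seconds_engaged: float  # how long the visitor interacted
    converted: bool         # True if the visitor completed the intended task


def summarize(visits):
    """Return exposures, average engagement time, and conversion rate per variant."""
    summary = {}
    for variant in sorted({v.variant for v in visits}):
        group = [v for v in visits if v.variant == variant]
        exposures = len(group)
        summary[variant] = {
            "exposures": exposures,
            "avg_seconds_engaged": sum(v.seconds_engaged for v in group) / exposures,
            "conversion_rate": sum(v.converted for v in group) / exposures,
        }
    return summary


# Made-up sample data, for illustration only.
visits = [
    Visit("A", 30.0, False),
    Visit("A", 50.0, True),
    Visit("B", 20.0, True),
    Visit("B", 40.0, True),
]

for variant, stats in summarize(visits).items():
    print(variant, stats)
```

With only four visits, these numbers are far too few to support a decision; the sketch only shows how each metric is calculated.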

An A/B test takes a significant amount of guesswork out of communications. For example, if you think of a new fundraising approach that could improve your organization’s revenue stream, you can set up an A/B test and then monitor the data.

If you’re right and the new option gets better results, you can move forward with your new plan. If the original technique still performs better, you can continue with your regular routine and think of different approaches in the future.

Ultimately, A/B testing helps you make decisions based on data. This way you know when a particular tactic or campaign is working or, more importantly, when one is failing.

Since every organization is different, don’t assume that what worked for others will automatically work for you. When you A/B test your activities and content, experiment with different ideas. This is useful because sometimes a small change can make a big difference in the results you get.

Common Areas to A/B Test

While not exhaustive, the following list presents several common areas where communicators use A/B testing:

- Headlines and subheadings

Whether they are the “titles” on a webpage, headlines for a piece of content, or the subject line of your email, you may want to experiment with these to see if small changes make a difference in engagement rates.

- Copy

Copy is simply the text that you have written in your communications materials. While copy can include the words and text itself, copy can also include the location of your text. Presenting two options and seeing which version is more engaging and/or converts more people could be an area to explore.

- Form design

When you look at your forms and the fields to be filled out, think about the number of fields and the information requested to see what potential leads submit and when completion rates drop significantly.

- Calls to action

For marketers, compelling calls to action (CTAs) are critical to getting leads to convert. Testing a variety of options provides data on which words deliver the best results. Here’s an article explaining how you can test CTAs using HubSpot.

- Images

Like calls to action, you can test different images and image types. For example, try different photos to see which one(s) capture your audience’s interest more. You might even explore different types of imagery, e.g., photos, icons, avatars, emojis, and so on.

- Colours

And finally, sometimes colour can have an impact on how your target audience behaves. Testing contrasting colours, complementary colours, or brighter colours can often provide insights as to what catches your target audience’s attention and what they prefer.

For more A/B testing ideas, read 60 A/B Testing Examples to Get You More Conversions.

How to Conduct an A/B Test

- Gather insights

Before starting your split tests, gather any information you have about your customers, donors, or other audience members (as the case may be). This can include both direct observations about your audiences and industry-insight data about your target audience’s preferences. The more detailed your understanding of potential audiences, the easier it will be to earn positive results. (Remember your audience archetypes? Here’s a chance to study them and update them.)

- Set your goals

Not all marketing activities have the same goals. For example, the metrics you will monitor for a brand awareness campaign will be different than those for a campaign focused on maximizing sales. A stated goal clarifies which metrics to focus on.

- Build your variations

Although most content systems will allow you to perform A/B tests with many different variants, it is usually best to limit each round of testing to two or three variations. This allows you to quickly hit sufficient traffic numbers or volume for each option so you can compare them. Doing so also isolates differences in the options to determine which technique led to better results.

- Run A/B tests

After building your variants, launch them and allow the A/B tests to run. There is no perfect sample size. Instead, the right size depends on your priorities. Targeting a smaller sample size will allow you to analyze your results and adjust more quickly, and at a lower cost, while targeting a larger sample size provides you with more information to support long-term decisions. Clearly, there are benefits and drawbacks to each approach. So, the decision will be based largely on your goals, objectives, and needed levels of confidence.

- Analyze the results

Once you have gathered enough data to make an informed decision, you can begin looking at the data gathered. Make note of the key metrics you are tracking as a priority, but do not entirely ignore other metrics. For example, if you are running a campaign to build brand awareness and discover that one version is generating significantly higher sales numbers, while this may not make it the ideal option for the current campaign, it still provides valuable information you can use to monetize other campaigns. The winning option is the one that is performing best, and that version should become the basis for your activities as you move forward.

- Make adjustments as needed

After you discover which option won the A/B test, adjust your activities as needed. If the metrics for the winning variant are clear, expose your entire target audience to that option. If it is unclear which option is the winner, perform a new A/B test by adding new options and testing them against the leading variation. When testing new versions against the previously leading variation, take results from the first test into account when deciding on the best option.

- Continue monitoring

Even after multiple rounds of A/B testing, you will still need to regularly monitor your activities to ensure they are still effective. Tactics will commonly reach points of diminishing returns, either as the content becomes less relevant or you begin to run out of potential ideas for a specific campaign. Make a habit of monitoring the campaign’s performance, even after you have found a winning variant and exposed the entire audience to it. Should performance begin to slip, you can end the campaign or return to Step 1 and go through a new round of A/B testing.
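The running and analysis steps above can be sketched in Python using only the standard library. This is a minimal sketch under stated assumptions, not a prescribed method: the function names are hypothetical, the hash-based split is just one common way to divide an audience, and the two-proportion z-test and the sample-size approximation are standard statistical techniques that this chapter does not itself specify. The default 5% significance level and 80% power are conventional choices, not requirements.

```python
import hashlib
import math
from statistics import NormalDist


def assign_variant(user_id, variants=("A", "B")):
    """Deterministically assign a user to a variant by hashing the user ID.

    The same ID always lands in the same variant (hypothetical helper).
    """
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    return variants[int(digest, 16) % len(variants)]


def sample_size_per_variant(rate_a, rate_b, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant to detect rate_a vs. rate_b.

    Uses the usual normal approximation for comparing two proportions.
    alpha and power are assumed conventions, not values from the chapter.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    variance = rate_a * (1 - rate_a) + rate_b * (1 - rate_b)
    return math.ceil((z_alpha + z_power) ** 2 * variance / (rate_b - rate_a) ** 2)


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates.

    Assumes at least one conversion and one non-conversion overall;
    otherwise the standard error is zero and the test is undefined.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Made-up numbers for illustration only.
print(assign_variant("donor-1001"))
print(sample_size_per_variant(0.10, 0.13))
z, p = two_proportion_z_test(conv_a=100, n_a=1000, conv_b=130, n_b=1000)
print(f"z = {z:.2f}, p = {p:.3f}")
```

A small p-value suggests the difference between variants is unlikely to be chance alone, which is one way to decide whether a winner is "clear." When the p-value is large, the result fits the unclear case described above, and another round of testing may be the better choice.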

Multivariate Testing

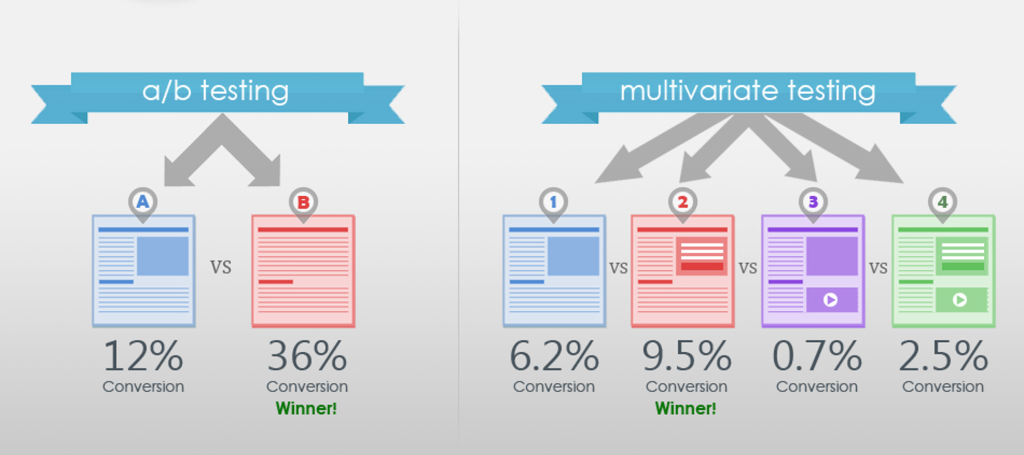

Multivariate testing is a technique for testing a hypothesis in which multiple variables are modified. The goal of multivariate testing is to determine which combination of variations performs the best out of all of the possible combinations (see the image above).
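To see how quickly "all of the possible combinations" grows, the short Python sketch below enumerates every combination for three page elements with two variations each. The element names and variation values are hypothetical examples, not taken from any particular tool.

```python
from itertools import product

# Hypothetical page elements and their variations (illustrative only).
elements = {
    "headline": ["Donate today", "Help us grow"],
    "image": ["photo", "icon"],
    "button_colour": ["green", "orange"],
}


def all_combinations(elements):
    """Return every combination of variations as a list of dictionaries."""
    names = list(elements)
    return [dict(zip(names, values)) for values in product(*elements.values())]


combos = all_combinations(elements)
print(len(combos))  # 2 * 2 * 2 = 8 combinations
for combo in combos:
    print(combo)
```

Because each combination needs its own share of traffic, adding elements or variations multiplies the audience size required, which is one reason multivariate tests tend to suit higher-traffic pages.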

Tools for A/B Testing

Now that you understand what A/B testing is and how to conduct these tests, the following links provide a few frequently used tools for A/B testing:

(Please note that the tools listed below are all free or offer free trials.)

- Kameleoon – Kameleoon is an advanced optimization platform that offers a variety of features. Using Kameleoon, you can run advanced A/B, split, and multivariate tests quickly and easily. You can also see key user insights with their navigation analysis tool and test different user segments with over 40 targeting criteria. Dynamic traffic-allocation algorithms also make sure your traffic is optimally divided in order to shorten your decision cycle and improve return on investment.

- Symplify (formerly SiteGainer) – Symplify is a company that offers a full suite of conversion optimization tools, including A/B testing, multivariate testing, personalization, heat maps, popups, and surveys. They also have a team of experts to assist you with test ideas, design, programming, analytics, and personalization.

The A/B testing software market is in a healthy place, with solutions for every size and type of business. Most of the options include a free trial (some as long as 60 days), so there is no reason not to try them for yourself.

A/B Testing – Additional Resources

Below are some articles with more details about A/B testing:

Media Attributions

- A/B testing overview by guest author on Techno FAQ is licensed under a CC BY-NC-SA 4.0 licence.

- What is A/B Testing? | Data Science in Minutes by Data Science Dojo is licensed under a Standard YouTube License.

- Multivariate A/B tests by Navot is licensed under a CC BY-SA 4.0 licence.

Attributions

This chapter was adapted from Foundations in Digital Marketing: Building Meaningful Customer Relationships and Engaged Audiences by Rochelle Grayson, which is licensed under a Creative Commons Attribution 4.0 International License, except where otherwise noted.