9.4 Artificial intelligence

9.4.1 Focusing on AI’s affordances for teaching and learning

Artificial intelligence (AI) is a daunting topic because its use in education raises so many issues. AI is also going through yet another period of extreme hype as a panacea for education, and currently sits at the top of the peak of inflated expectations. This hype, however, is driven mainly by successful applications outside the field of education, such as in finance, marketing and medical research. Furthermore, the term ‘AI’ is increasingly being used (incorrectly) as a general term for any complex computational activity.

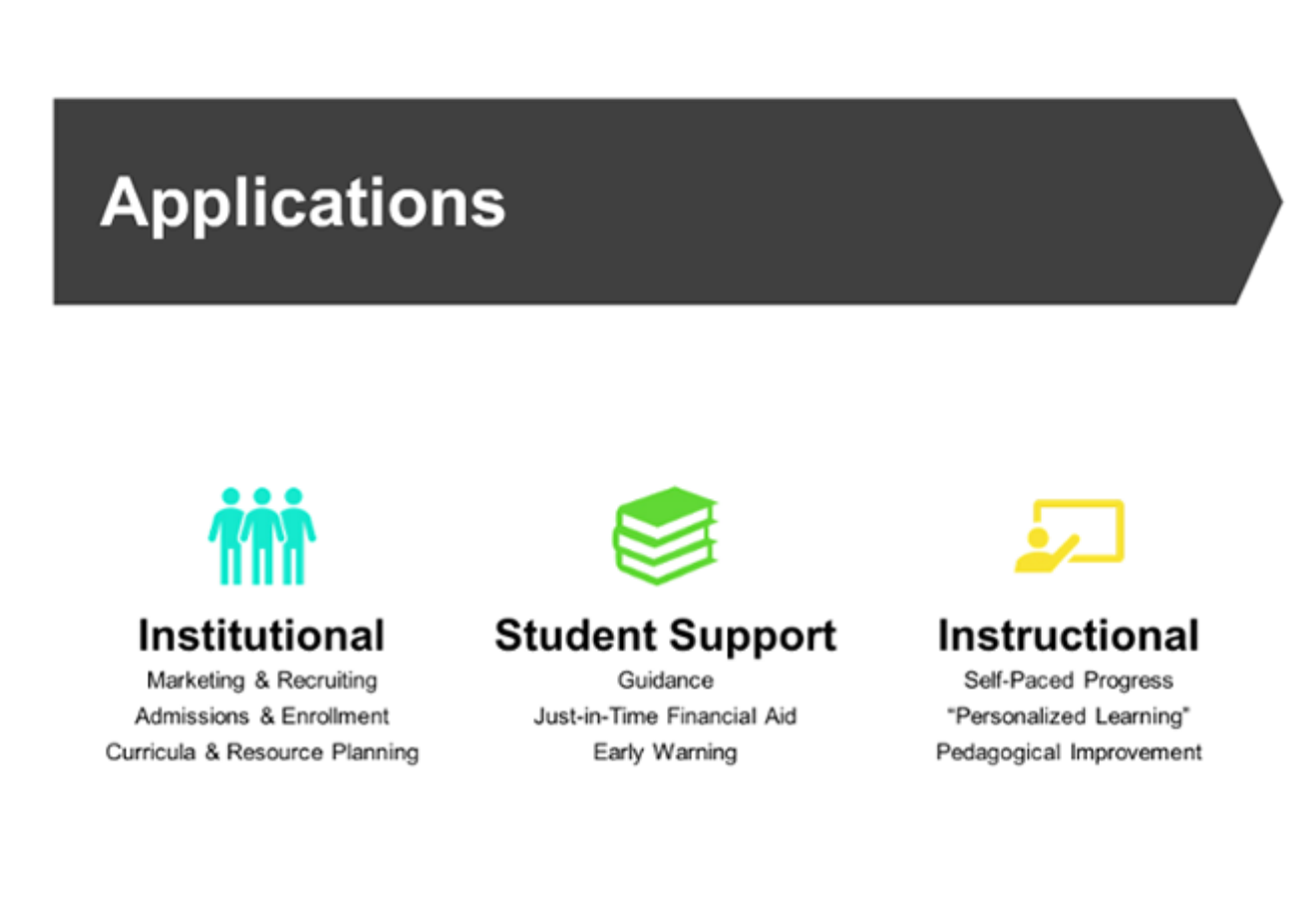

Even in education, there are very different possible areas of application of AI. Zeide (2019) makes a very useful distinction between institutional, student support and instructional applications (Figure 9.4.2 below).

Although AI applications for institutional or student support purposes are very important, this chapter is focused on the pedagogical affordances of different media and technologies (what Zeide calls ‘instructional’ applications). In particular, the focus in this section will be on the role of AI as a form of media or technology for teaching and learning, its pedagogical affordances, and its strengths and weaknesses in this area.

Moreover, AI is really a sub-set of computing. Thus all the general affordances of computing in education set out in Chapter 8, Section 5 will apply to AI. This section aims to tease out the extra potential that AI can offer in teaching and learning. This will mean particularly focusing on its role as a medium rather than a general technology in teaching, which means looking at a wider context than just the computational aspects of AI, in particular its pedagogical role.

9.4.2 What is artificial intelligence?

The original definition of artificial intelligence by McCarthy (1956, cited in Russell & Norvig, 2010) is:

every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it. An attempt will be made to find how to make machines use language, form abstractions and concepts, solve kinds of problems now reserved for humans, and improve themselves.

Zawacki-Richter et al. (2019), in a review of the literature on AI in higher education, report that those authors that defined artificial intelligence tended to describe it as:

intelligent computer systems or intelligent agents with human features, such as the ability to memorise knowledge, to perceive and manipulate their environment in a similar way as humans, and to understand human natural language.

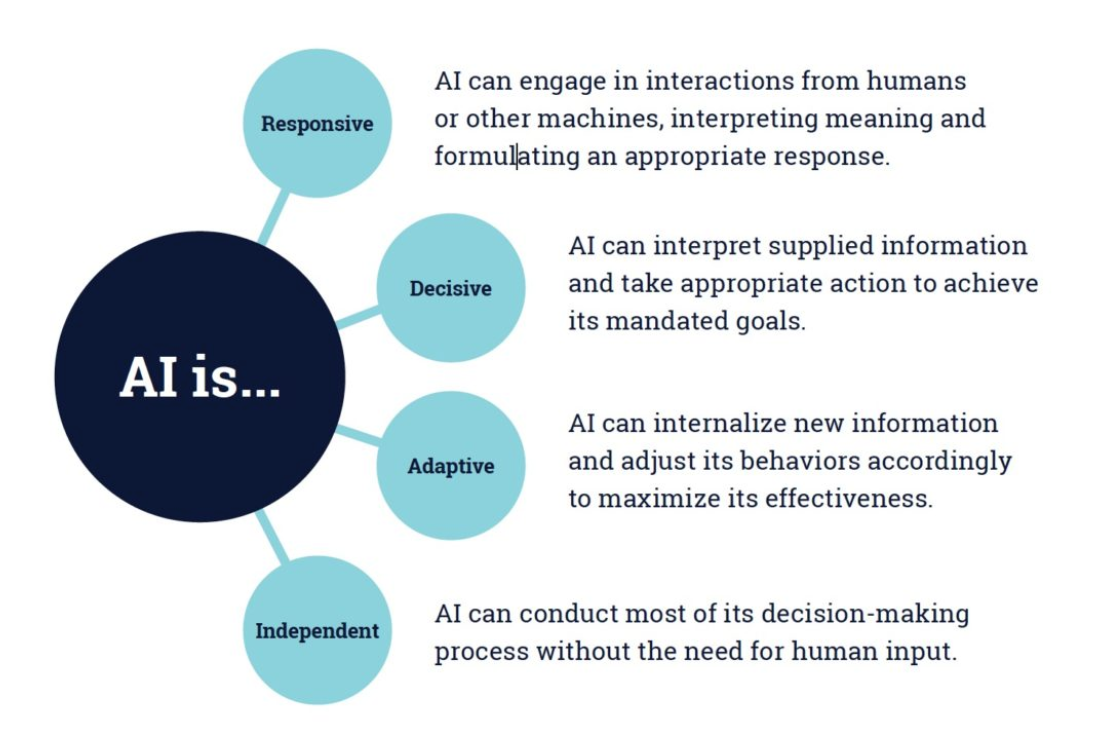

Klutka et al. (2018) also defined AI in terms of what it can do in higher education (Figure 9.4.3 below):

There are three basic computing requirements that set ‘modern’ AI apart from other computing applications:

- access to massive amounts of data;

- computational power on a large scale to manage and analyze the data;

- powerful and relevant algorithms for the data analysis.

9.4.3 Why use artificial intelligence for teaching and learning?

There are two somewhat different goals for the general use of artificial intelligence. The first is to increase the efficiency of a system or organization, primarily by reducing the high costs of labour, namely by replacing relatively expensive human workers with relatively less costly machines (automation). Politicians, entrepreneurs and policy makers increasingly see a move to automation as a way of reducing the costs of education. However, in education in particular, teachers and instructors are the main cost.

The second is to increase the effectiveness of teaching and learning, in economic terms to increase outputs: better learning outcomes and greater benefits for the same or more cost. With this goal, AI would be used alongside or supporting teachers and instructors.

Klutka et al. (2018) provided a general statement of the potential of AI in higher education ‘instruction’ through Figure 9.4.4:

These are understandable goals, but we shall see later in this section that such goals to date are mainly aspirational rather than real.

In terms of this book, a key focus is on developing the knowledge and skills required by learners in a digital age. The key test then for artificial intelligence is to what extent it can assist in the development of these higher level skills.

9.4.4 Affordances and examples of AI use in teaching and learning

Zawacki-Richter et al. (2019), in a review of the literature on AI in education, initially identified 2,656 research papers in English or Spanish. They then narrowed the list down by eliminating duplicates, limiting publication to articles in peer-reviewed journals published between 2007 and 2018, and eliminating articles that turned out in the end not to be about the use of AI in education. This resulted in a final 145 articles, which were then analysed and classified into different uses of AI in education. This section draws heavily on this classification. (It should be noted that within the 145 articles, only 92 were focused on instruction/student support. The rest were on institutional uses such as identifying at-risk students before admission.)

The Zawacki-Richter study offers one insight into the main ways that AI was used in education for teaching and learning between 2007 and 2018, the closest we can come to ‘affordances’. First, three main general ‘instructional’ categories (with considerable overlap) from the study are provided below, followed by some specific examples. (I have omitted Zawacki-Richter et al.’s category of profiling and prediction, which is concerned with administrative issues such as admissions, course scheduling, and early warning systems for students at risk.)

9.4.4.1 Intelligent tutoring systems (29 out of 92 articles reviewed by Zawacki-Richter et al.)

Intelligent tutoring systems:

- provide teaching content to students and, at the same time, support them by giving adaptive feedback and hints to solve questions related to the content, as well as detecting students’ difficulties/errors when working with the content or the exercises;

- curate learning materials based on student needs, such as by providing specific recommendations regarding the type of reading material and exercises done, as well as personalised courses of action;

- facilitate collaboration between learners, for instance, by providing automated feedback, generating automatic questions for discussion, and the analysis of the process.

9.4.4.2 Assessment and evaluation (36 out of 92)

AI supports assessment and evaluation through:

- automated grading;

- feedback, including a range of student-facing tools, such as intelligent agents that provide students with prompts or guidance when they are confused or stalled in their work;

- evaluation of student understanding, engagement and academic integrity.

9.4.4.3 Adaptive systems and personalization (27 out of 92)

AI enables adaptive systems and the personalization of learning by:

- teaching course content then diagnosing strengths or gaps in student knowledge, and providing automated feedback;

- recommending personalized content;

- supporting teachers in learning design by recommending appropriate teaching strategies based on student performance;

- supporting representation of knowledge in concept maps.
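The first two items in this list (diagnosing gaps, then recommending content) can be illustrated with a very small sketch. It is not taken from any system reviewed by Zawacki-Richter et al.; the quiz log, the catalogue of remedial materials and the 0.6 mastery threshold are all hypothetical values chosen for illustration. It also shows how little ‘intelligence’ such a present/test/feedback loop strictly requires.

```python
from collections import defaultdict

# Hypothetical quiz log for one student: (topic, answered_correctly).
responses = [
    ("fractions", True), ("fractions", False), ("fractions", False),
    ("decimals", True), ("decimals", True),
    ("percentages", False), ("percentages", True),
]

# Hypothetical catalogue of remedial material, one item per topic.
remedial = {
    "fractions": "Worked examples: adding fractions",
    "decimals": "Practice set: decimal place value",
    "percentages": "Video: percentages as fractions of 100",
}

MASTERY_THRESHOLD = 0.6  # assumed cut-off, not taken from any cited system


def diagnose(responses):
    """Return the proportion of correct answers for each topic."""
    totals = defaultdict(lambda: [0, 0])  # topic -> [correct, attempted]
    for topic, correct in responses:
        totals[topic][0] += int(correct)
        totals[topic][1] += 1
    return {topic: c / n for topic, (c, n) in totals.items()}


def recommend(mastery, threshold=MASTERY_THRESHOLD):
    """List remedial items for topics below the threshold, weakest first."""
    gaps = sorted((score, topic) for topic, score in mastery.items()
                  if score < threshold)
    return [remedial[topic] for _, topic in gaps]


mastery = diagnose(responses)
recommendations = recommend(mastery)
print(mastery)
print(recommendations)
```

A system built this way adapts only to what was tested, which is one reason such applications tend to stay at the level of comprehension.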

Klutka et al. (2018) identified several uses of AI for teaching and learning in universities in the USA:

- ECoach, developed at the University of Michigan, provides formative feedback for a variety of mainly large classes in the STEM field. It tracks students’ progress through a course and directs them to appropriate actions and activities on a personalized basis,

- sentiment analysis (using students’ facial expressions to measure their level of engagement in studying),

- an application to monitor student engagement in discussion forums, and

- organizing commonly shared mistakes in exams into groups for the instructor to respond once to the group rather than individually.
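The last item, grouping shared mistakes so that the instructor responds once per group, can be sketched in a few lines. This is only an assumption about the general idea, using exact matching on multiple-choice answers; the answer key and submissions are hypothetical, and real tools that group free-text or handwritten answers would need far more sophisticated matching.

```python
from collections import defaultdict

# Hypothetical answer key and submissions for a two-question exam.
ANSWER_KEY = {"Q1": "B", "Q2": "D"}

submissions = {
    "student_01": {"Q1": "B", "Q2": "A"},
    "student_02": {"Q1": "C", "Q2": "A"},
    "student_03": {"Q1": "C", "Q2": "D"},
    "student_04": {"Q1": "B", "Q2": "A"},
}


def group_mistakes(submissions, answer_key):
    """Map each (question, wrong answer) pair to the students who gave it."""
    groups = defaultdict(list)
    for student, answers in submissions.items():
        for question, given in answers.items():
            if given != answer_key[question]:
                groups[(question, given)].append(student)
    return dict(groups)


mistake_groups = group_mistakes(submissions, ANSWER_KEY)

# Largest groups first: these are the mistakes worth one shared response.
for (question, given), students in sorted(
        mistake_groups.items(), key=lambda item: -len(item[1])):
    print(f"{question}: answer {given} given by {len(students)} students: {students}")
```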

9.4.4.4 Chatbots

A chatbot is programming that simulates the conversation or ‘chatter’ of a human being through text or voice interactions (Rouse, 2018). Chatbots in particular are a tool used to automate communications with students. Bayne (2014) describes one such application in a MOOC with 90,000 subscribers. Much of the student activity took place outside the Coursera platform within social media. The five academics teaching the MOOC were all active on Twitter, each with large networks, and Twitter activity around the MOOC hashtag (#edcmooc) was high across all instances of the course (for example, a total of around 180,000 tweets were exchanged on the first offering of the MOOC). A ‘Teacherbot’ was designed to roam the tweets using the course Twitter hashtag, using keywords to identify ‘issues’ then choosing pre-designed responses to these issues, which often entailed directing students to more specific research on a topic. For a review of research on chatbots in education, see Winkler and Söllner (2018).
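The keyword-and-canned-response approach described for the Teacherbot can be sketched as follows. This is not Bayne’s implementation: only the #edcmooc hashtag comes from the account above, while the rules, the wording of the replies and the function name `reply_to` are hypothetical.

```python
import re

HASHTAG = "#edcmooc"  # course hashtag mentioned in the text

# Hypothetical rules: a set of trigger keywords and one pre-designed reply.
RULES = [
    ({"deadline", "due"},
     "Assignment dates are listed on the course schedule page."),
    ({"reading", "readings", "article"},
     "The reading list for each week is linked from the course home page."),
]


def reply_to(tweet):
    """Return a pre-designed reply, or None if the bot should stay silent."""
    text = tweet.lower()
    if HASHTAG not in text:
        return None  # only tweets carrying the course hashtag are considered
    words = set(re.findall(r"[a-z']+", text))
    for keywords, response in RULES:
        if words & keywords:  # any trigger keyword present
            return response
    return None


print(reply_to("When is the final essay due? #edcmooc"))
print(reply_to("Loving the discussion this week #edcmooc"))
```

A bot like this has no model of what the student means; it matches surface keywords, which is why the pre-designed responses usually point students elsewhere rather than answer the question directly.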

9.4.4.5 Automated essay grading

Thompson (2022) provides a simple explanation, aimed mainly at teachers, of how automated essay scoring (AES) works.

The first and most critical thing to know is that there is not an algorithm that “reads” the student essays. Instead, you need to train an algorithm….You have to actually grade the essays (or at least a large sample of them) and then use that data to fit a machine learning algorithm.

This means identifying the rubrics you use in grading essays. You then mark a large number of essays to determine the weight (say on a five point scale) that you assign to each rubric to grade each assignment. You try several AI automated essay grading models and run your assignments through those models a number of times to see which correlates best with your own grading. The models will in fact ‘learn’ to get better the more essays you run through them.

As Thompson puts it:

There is a trade-off between simplicity and accuracy. Complex models might be accurate but take days to run. A simpler model might take 2 hours but with a 5% drop in accuracy….The general consensus in research is that AES algorithms work as well as a second human, and therefore serve very well in that role. But you shouldn’t use them as the only score.
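The procedure Thompson describes (grade a sample by hand, fit candidate models to those grades, then keep the model that agrees best with the human grader) can be sketched in miniature. Everything here is an assumption made for illustration: the data are invented, each ‘model’ is a one-feature straight line fitted by least squares, and agreement is measured with Pearson correlation on held-out essays. Real scoring engines use many more features and far larger samples.

```python
from math import sqrt
from statistics import mean

# Hypothetical data: (word_count, spelling_errors, human_score on a 1-5 scale).
graded_by_teacher = [(120, 9, 1), (200, 7, 2), (310, 6, 3), (390, 3, 4), (480, 2, 5)]
held_out = [(150, 8, 1), (250, 3, 2), (350, 6, 3), (450, 2, 4)]

# Each candidate model uses a single feature; the value is its column index.
FEATURES = {"word_count": 0, "spelling_errors": 1}


def fit_line(xs, ys):
    """Ordinary least squares for one feature: returns (slope, intercept)."""
    mx, my = mean(xs), mean(ys)
    slope = (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
             / sum((x - mx) ** 2 for x in xs))
    return slope, my - slope * mx


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return cov / sqrt(sum((x - mx) ** 2 for x in xs)
                      * sum((y - my) ** 2 for y in ys))


def evaluate(column):
    """Fit on the teacher-graded sample, then score agreement on held-out essays."""
    slope, intercept = fit_line([row[column] for row in graded_by_teacher],
                                [row[2] for row in graded_by_teacher])
    predicted = [slope * row[column] + intercept for row in held_out]
    return pearson(predicted, [row[2] for row in held_out])


agreement = {name: evaluate(column) for name, column in FEATURES.items()}
best_model = max(agreement, key=agreement.get)
print(agreement)
print("Best agreement with the human grader:", best_model)
```

In this invented data set the word-count model tracks the human scores most closely. That does not mean longer essays are better; the model has only found a pattern that correlates with the grades it was given.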

Natural language processing (NLP) artificial intelligence systems – often called automated essay scoring engines – are now either the primary or secondary grader on standardized tests in at least 21 states in the USA (Feathers, 2019). According to Feathers:

Essay-scoring engines don’t actually analyze the quality of writing. They’re trained on sets of hundreds of example essays to recognize patterns that correlate with higher or lower human-assigned grades. They then predict what score a human would assign an essay, based on those patterns.

Feathers, though, claims that research from psychometricians and AI experts shows that these tools are susceptible to a common flaw in AI: bias against certain demographic groups (see Ongweso, 2019).

Lazendic et al. (2018) offer a detailed account of the plan for machine grading in Australian high schools. They state:

It is …crucially important to acknowledge that the human scoring models, which are developed for each NAPLAN writing prompt, and their consistent application, ensure and maintain the validity of NAPLAN writing assessments. Consequently, the statistical reliability of human scoring outcomes is fundamentally related to and is the key evidence for the validity of NAPLAN writing marking.

In other words, the marking must be based on consistent human criteria. However, it was announced later (Hendry, 2018) that Australian education ministers agreed not to introduce automated essay marking for NAPLAN writing tests, heeding calls from teachers’ groups to reject the proposal.

Perelman (2013) developed a computer program called the BABEL generator that patched together strings of sophisticated words and sentences into meaningless gibberish essays. The nonsense essays consistently received high, sometimes perfect, scores when run through several different scoring engines. See also Mayfield, 2013, for a thoughtful analysis of the issues in the automated marking of writing. For a good description of where automated essay scoring is headed, see Kumar and Boulanger (2020).

AES may eventually have potential for marking massive numbers of assignments for nation-wide standard examinations such as the NAPLAN in Australia or the General Certificate of Secondary Education in the U.K., but such methods are still impractical for most individual teachers or instructors. At the time of writing, despite considerable pressure to use automated essay grading for standardized exams, the technology still has many questions lingering over it.

9.4.4.6 Online proctoring

Especially as a result of the Covid-19 pandemic, there has been a rapid increase in the use of AI-based proctoring services to check whether students taking exams at home are cheating. There is a surprisingly large number of online proctoring companies, such as Examity, Mercer/Mettle, Proctortrack, OnVUE (from Pearson Publishing), Meazure Learning (formerly ProctorU), and Proctorio. These use cameras either installed in the student’s computer or provided by the proctoring company to be used in the student’s home or wherever the exam is taken. Most online proctoring comes in two forms: live, with a remote person watching (usually contracted by the proctoring company); or automated. Sometimes there is a mix of both.

Increasingly, these services use AI to identify possible proxies for cheating behaviour, such as

- the student’s face not matching a photo ID uploaded prior to the exam,

- ‘distraction’: movement of the student during the exam beyond the limit of the camera

- other people in the room

- extraneous human sound

- books or other documents on the desk

- a 360 degree view of the room where the student is taking the exam.

Some companies create, through the use of AI, a ‘credibility index’ as a result. Students usually have to provide personal data, such as name, address, student number and sometimes credit card information. Students – or even the institution or school requiring the use of the proctoring service – have no control over the use of this personal data, which can be and is often shared with third parties.

The sensitive information collected by online proctoring companies has raised many concerns among students – and parents, who are automatically excluded from the exam process.

Nigam et al. (2021) systematically reviewed 43 papers on AI-based and non-AI-based proctoring systems published between 2015 and 2021. They report:

‘Our analysis …reveals that security issues associated with AIPS are multiplying and are a cause of legitimate concern. Major issues include security and privacy concerns, ethical concerns, trust in AI-based technology, lack of training among usage of technology, cost and many more. It is difficult to know whether the benefits of these online proctoring technologies outweigh their risks. The most reasonable conclusion we can reach in the present is that the ethical justification of these technologies and their various capabilities requires us to rigorously ensure that a balance is struck between these concerns and the possible benefits.’

Online proctoring is a good example of trying to adapt 19th century methods to 21st century technology. Online assessment is discussed more fully in Chapter 6.8.4, which indicated that assessment can be done differently with online learning, using for instance continuous assessment, as student learning is automatically tracked through an LMS, or ePortfolios, which allow students to create an authentic digital portfolio of work. What should be avoided is the intrusiveness, lack of privacy, and lack of transparency that come with AI-based proctoring services.

9.4.5 Strengths and weaknesses

There are several ways to assess the value of the teaching and learning affordances of particular applications of AI in teaching and learning:

- is the application based on the three core features of ‘modern’ AI: massive data sets, massive computing power, and powerful and relevant algorithms?

- does the application have clear benefits in terms of affordances over other media, and particularly general computing applications?

- does the application facilitate the development of the skills and knowledge needed in a digital age?

- is there unintended bias built into the algorithms? Does it appear to discriminate against certain categories of people?

- is the application ethical in terms of student and teacher/instructor privacy and their rights in an open and democratic society?

- are the results of the application ‘explainable’? For example, can a teacher or instructor or those responsible for the application understand and explain to students how the results or decisions made by the AI application were reached?

These issues are addressed below.

9.4.5.1 Is it really a ‘modern’ AI application in teaching and learning?

Looking at the Zawacki-Richter et al. study and many other research papers published in peer-reviewed journals, very few so-called AI applications in teaching and learning meet the criteria of massive data, massive computing power and powerful and relevant algorithms. Much of the intelligent tutoring within conventional education is what might be termed ‘old’ AI: there is not a lot of processing going on, and the data points are relatively small. Many so-called AI papers focused on intelligent tutoring and adaptive learning are really just general computing applications.

Indeed, so-called intelligent tutoring systems, automated multiple-choice test marking, and automated feedback on such tests have been around since the early 1980s. The closest to modern AI applications appear to be automated essay grading of standardised tests administered across an entire education system, and use of AI in online proctoring. However there are major problems with both such applications. More development is clearly needed to make automated essay grading and AI-based online proctoring more reliable and secure.

The main advantage that Klutka et al. (2018) identify for AI is that it opens up the possibility for higher education services to become scalable at an unprecedented rate, both inside and outside the classroom. However, it is difficult to see how ‘modern’ AI could be used within the current education system, where class sizes or even whole academic departments, and hence data points, are relatively small, in terms of the numbers needed for ‘modern’ AI. It cannot be said to date that modern AI has been tried, and failed, in teaching and learning; it’s not really even been tried.

Applications outside the current formal education systems are more realistic, for MOOCs, for instance, or for corporate training on an international scale, or for distance teaching universities with very large numbers of students. The requirement for massive data does suggest that the whole education system could be massively disrupted if the necessary scale could be reached by offering modern AI-based education outside the existing education systems, for instance by large Internet corporations that could tap into and use personal data from their massive markets of consumers.

However, there is still a long way to go before AI makes that feasible. This is not to say that there could not be such applications of modern AI in the future, but at the moment, in the words of the old English bobby, ‘Move along, now, there’s nothing to see here.’

However, for the sake of argument, let’s assume that the definition of AI offered here is too strict and that most of the applications discussed in this section are examples of AI. How do these applications of AI meet the other criteria above?

9.4.5.2 Do the applications facilitate the development of the skills and knowledge needed in a digital age?

This does not seem to be the case in most so-called AI applications for teaching and learning today. They are heavily focused on content presentation and testing for understanding and comprehension. In particular, Zawacki-Richter et al. make the point that most AI developments for teaching and learning – or at least the research papers – are by computer scientists, not educators. Since AI tends to be developed by computer scientists, they tend to use models of learning based on how computers or computer networks work (since of course it will be a computer that has to operate the AI). As a result, such AI applications tend to adopt a very behaviourist model of learning: present/test/feedback. Lynch (2017) argues that:

If AI is going to benefit education, it will require strengthening the connection between AI developers and experts in the learning sciences. Otherwise, AI will simply ‘discover’ new ways to teach poorly and perpetuate erroneous ideas about teaching and learning.

Comprehension and understanding are indeed important foundational skills, but AI so far is not helping learners to develop the higher order skills of critical thinking, problem-solving, creativity and knowledge management. Indeed, Klutka et al. (2018) claim that AI can handle many of the routine functions currently done by instructors and administrators, freeing them up to solve more complex problems and connect with students on deeper levels. This reinforces the view that the role of the instructor or teacher needs to move away from content presentation, content management and testing of content comprehension – all of which can be done by computing – towards skills development. The good news is that AI used in this way supports teachers and instructors, but does not replace them. The bad news is that many teachers and instructors will need to change the way they teach or they will become redundant.

9.4.5.3 Is there unintended bias in the algorithms?

It could be argued that all AI does is to encapsulate the existing biases in the system. The problem though is that this bias is often hard to detect in any specific algorithm, and that AI tends to scale up or magnify such biases. These are issues more for institutional uses of AI, but machine-based bias can discriminate against students in a teaching and learning context as well, and especially in automated assessment.
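One simple way to look for such bias in automated assessment is to compare machine scores with human scores for the same work, group by group. The sketch below is a descriptive check only, not a formal fairness audit; the group labels and scores are invented, and a real analysis would need much larger samples and appropriate statistical tests.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical records: (group label, human score, machine score) on a 1-5 scale.
records = [
    ("group_a", 4, 4), ("group_a", 3, 3), ("group_a", 5, 5), ("group_a", 2, 3),
    ("group_b", 4, 3), ("group_b", 3, 2), ("group_b", 5, 4), ("group_b", 2, 2),
]


def mean_gap_by_group(records):
    """Average (machine - human) score difference for each group."""
    gaps = defaultdict(list)
    for group, human, machine in records:
        gaps[group].append(machine - human)
    return {group: mean(values) for group, values in gaps.items()}


gaps = mean_gap_by_group(records)
disparity = max(gaps.values()) - min(gaps.values())
print(gaps)
print("Largest difference between groups:", disparity)
```

In this invented example the machine marks one group slightly above the human grader and the other well below. A pattern like this would stay hidden if only the overall agreement between machine and human scores were reported.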

9.4.5.4 Is the application ethical?

There are many potential ethical issues arising from the use of AI in teaching and learning, mainly due to the lack of transparency in the AI software, and particularly the assumptions embedded in the algorithms. The literature review by Zawacki-Richter et al. (2019) concluded:

…a stunning result of this review is the dramatic lack of critical reflection of the pedagogical and ethical implications as well as risks of implementing AI applications in higher education.

What data are being collected, who owns or controls it, how is it being interpreted, how will it be used? Policies will need to be put in place to protect students and teachers/instructors (see for instance the U.S. Department of Education’s student data policies for schools or British Columbia’s Ministry of Advanced Education and Skills Training’s Digital Learning Strategy). Students and teachers/instructors need to be involved in such policy development.

9.4.5.5 Are the results explainable?

The biggest problem with AI generally, and in teaching and learning in particular, is the lack of transparency. Why did it give me this grade? Why am I directed to this reading rather than that one, or re-directed to a reading I didn’t understand the first time? Why isn’t my answer acceptable? Lynch (2017) argues that most data collected about student learning is indirect, inauthentic, lacking demonstrable reliability or validity, and reflecting unrealistic time horizons to demonstrate learning.

‘current examples of AIEd often rely on …. poor proxies for learning, using data that is easily collectable rather than educationally meaningful.’

9.6 Conclusions

9.6.1 Dream on, AI enthusiasts

In terms of what AI is actually doing now for teaching and learning, the dream is way beyond the reality. What works well in finance or marketing or astronomy does not necessarily translate to teaching and learning contexts. In doing the research for this section, it proved very difficult to find any compelling examples of AI for teaching and learning, compared with serious games or virtual reality. It is always hard to prove a negative, but the results to date of applying AI to teaching and learning are extremely limited and disappointing (see, for instance, Brooks, 2021).

This is mainly due to the difficulty of applying ‘modern’ AI at scale in a very fragmented system that relies heavily on relatively small class sizes, programs, and institutions. Probably for modern AI to ‘work’, a totally different organizational structure for teaching and learning would be needed. But be careful what you wish for.

There is a strong affective or emotional influence in learning. Students often learn better when they feel that the instructor or teacher cares. In particular, students want to be treated as individuals, with their own interests, ways of learning, and some sense of control over their learning. Although at a mass level human behaviour is predictable and to some extent controllable, each student is an individual and will respond slightly differently from other students in the same context. Because of these emotional and personal aspects of learning, students need to relate in some way to their teacher or instructor. Learning is a complex activity where only a relatively minor part of the process can be effectively automated. Learning is an intensely human activity, that benefits enormously from personal relationships and social interaction. This relational aspect of learning can be handled equally well online as face-to-face, but it means using computing to support communication as well as delivering and testing content acquisition.

9.6.2 Not fit for purpose

Above all, AI has not progressed to the point yet where it can support the higher levels of learning required in a digital age or the teaching methods needed to do this, although other forms of computing or technology, such as simulations, games and virtual reality, can.

In particular AI developers have been largely unaware that learning is developmental and constructed, and instead have imposed an old and less appropriate method of teaching based on behaviourism and an objectivist epistemology. However, to develop the skills and knowledge needed in a digital age, a more constructivist approach to learning is needed. There has been no evidence to date that AI can support such an approach to teaching, although it may be possible.

9.6.3 The real agenda of AI advocates

AI advocates often argue that they are not trying to replace teachers but to make their life easier or more efficient. This should be taken with a pinch of salt. The key driver of AI applications is cost-reduction, which means reducing the number of teachers, as this is the main cost in education. In contrast, the key lesson from all AI developments is that we will need to pay increased attention to the affective and emotional aspects of life in a robotic-heavy society, so teachers will become even more important.

Another problem with artificial intelligence is that the same old hype keeps going round and round. The same arguments for using artificial intelligence in education go back to the 1980s. Millions of dollars went into AI research at the time, including into educational applications, with absolutely no payoff.

There have been some significant developments in AI since then, in particular pattern recognition, access to and analysis of big data sets, powerful algorithms, leading to formalized decision-making within limited boundaries. The trick though is to recognise exactly what kind of applications these new AI developments are good for, and what they cannot do well. In other words, the context in which AI is used matters, and needs to be taken account of. Teaching and learning is a particularly difficult environment then for AI applications.

9.6.4 Defining AI’s role in teaching and learning

Nevertheless, there is plenty of scope for useful applications of AI in education, but only if there is continuing dialogue between AI developers and educators as new developments in AI become available. But that will require being very clear about the purpose of AI applications in education and being wide awake to the unintended consequences.

In education, AI is still a sleeping giant. ‘Breakthrough’ applications of AI for teaching and learning are probably not going to come from the mainstream universities and colleges, but from outside the formal post-secondary system, through organizations such as LinkedIn, lynda.com, Amazon or Coursera, that have access to large data sets that make the applications of AI scalable and worthwhile (to them). However, this would pose an existential threat to public schools, colleges and universities. The issue then becomes: what system is best to protect and sustain the individual in a digital age: multinational corporations using AI for teaching and learning; or a public education system with human teachers using AI as a support for learners?

The key question then is whether technology should aim to replace teachers and instructors through automation, or whether technology should be used to empower not only teachers but also learners. Above all, who should control AI in education: educators, students, computer scientists, or large corporations? These are indeed existential questions if AI does become immensely successful in reducing the costs of teaching and learning: but at what cost to us as humans? Fortunately AI is not yet in a position to provide such a threat; but it may well do so soon.

References

Bayne, S. (2014) Teacherbot: interventions in automated teaching, Teaching in Higher Education, Vol. 20, No. 4

Brooks, D.C. (2021) EDUCAUSE QuickPoll Results: Artificial Intelligence Use in Higher Education, EDUCAUSE Review, June 11

Feathers, T. (2019) Flawed Algorithms Are Grading Millions of Students’ Essays, Motherboard: Tech by Vice, 20 August

Hendry, J. (2018) Govts dump NAPLAN robo marking plans itnews, 30 January

Klutka, J. et al. (2018) Artificial Intelligence in Higher Education: Current Uses and Future Applications Louisville Ky: Learning House (accessed 19 April, 2019, but no longer available.)

Kumar, V. and Boulanger, D. (2020) Explainable Automated Essay Scoring: Deep Learning Really Has Pedagogical Value, Frontiers in Education, 6 October

Lazendic, G., Justus, J.-A., and Rabinowitz, S. (2018) NAPLAN Online Automated Scoring Research Program: Research Report, Canberra, Australia: Australian Curriculum, Assessment and Reporting Authority

Lynch, J. (2017) How AI will destroy education, buZZrobot, 13 November (accessed 15 February, 2019 – no longer available.)

Mayfield, E. (2013) Six ways the edX Announcement Gets Automated Essay Grading Wrong, e-Literate, April 8

Nigam, A., Pasricha, R., Singh, T. and Churi, P. (2021) A Systematic Review on AI-based Proctoring Systems: Past, Present and Future, Education and Information Technologies, Vol. 26, June 23

Ongweso Jr., E. (2019) Racial Bias in AI Isn’t Getting Better and Neither Are Researchers’ Excuses, Motherboard: Tech by Vice, July 29

Perelman, L. (2013) Critique of Mark D. Shermis & Ben Hamner, Contrasting State-of-the-Art Automated Scoring of Essays: Analysis, Journal of Writing Assessment, Vol. 6, No. 1

Rouse, M. (2019) What is chatbot? Techtarget Network Customer Experience, 5 January

Russell, S. and Norvig, P. (2010) Artificial Intelligence – A Modern Approach New Jersey: Pearson Education

Thompson, N. (2022) What is automated essay scoring?, ASC, April 22

Winkler, R. & Söllner, M. (2018): Unleashing the Potential of Chatbots in Education: A State-Of-The-Art Analysis. Academy of Management Annual Meeting (AOM) Chicago: Illinois

Zawacki-Richter, O. et al. (2019) Systematic review of research on artificial intelligence applications in higher education – where are the educators? International Journal of Technology in Higher Education, Vol. 16, No. 39

Zeide, E. (2019) Artificial Intelligence in Higher Education: Applications, Promise and Perils, and Ethical Questions EDUCAUSE Review, Vol. 54, No. 3, August 26

Activity 9.4 Assessing artificial intelligence

- what do you think about AI for teaching and learning? Is it so esoteric that you can safely not worry about it? Or do you feel you need to be better informed about what it can and cannot do?

- do you agree with the three minimum requirements for modern AI: large data sets, powerful computing capacity, and powerful algorithms? Are there other possible applications of AI that do not need to meet these three criteria?

- can you think of areas of teaching and learning that could generate large data sets even in a class of 30?

- what other skills beside comprehension could AI facilitate? How would it do this?

Click on the podcast below to get some feedback on these questions, plus some of my personal thoughts on AI and teaching and learning:
