Chapter 4 Measures of Dispersion

4.3 Variance

Similarly to how the median is about the central position of a case while the mean is about the average of actual numerical values, the range and interquartile range are about positions in the overall (ordered) distribution of cases while the remaining two dispersion measures, the variance and the standard deviation, are about averaging numerical values.

Thus, like the mean, the variance and the standard deviation account for all cases, not just a select few. Unlike the mean, however, instead of calculating the average of all values, the standard deviation and variance calculate (approximately) the average of the distances of each and every value to the mean.

The mean is a measure of central tendency, as you know by now, and it represents a sort of “centre” of the data, value-wise (as opposed to position-wise, which is what the median is). You know that all cases’ values enter the calculation of the mean (after all, we sum all values and divide the sum by their total number to get the mean), but, at the same time, the values are different from the mean. (That is, either all are different, or all but one: it’s possible that one of the values is actually what the mean is, in which case the difference is zero.)

This difference, between a value of a case and the mean, is what we call distance to the mean. We have to average these (by adding all of the distances of all cases’ values together and dividing by their total number) to obtain the variance and the standard deviation. Once we have these dispersion measures, we’ll be able to tell how all cases are spread out around the mean. This, in turn, gives us information about how much variability there is in a given variable’s cases, that is, whether they are dispersed or clustered together.

You’ll be glad to know that the variance and the standard deviation are calculated in almost the exact same way; the standard deviation needs just one additional mathematical operation after getting the variance. In a sense, they calculate the same thing but are expressed differently, and the standard deviation is usually considered easier to interpret.

This is all the good news I have for you at this point, I’m afraid, as what follows is a calculation process containing several steps. On the whole, it may look complicated though it really isn’t; the key is to not forget what you are doing and where you are in the process. If you find yourself losing track, simply go back and start from the beginning, paying attention to what steps you go through.

Variance. Since we want an average of the distances of the cases from the mean, it would make sense to start with getting these distances as Step 1. Step 2 would be to add these distances together, then Step 3 would be to divide the sum by their total number. This is easier said than done, as you shall see (ominous foreshadowing!), so I’ll divide Step 1 into two sub-steps, Step 1A (getting the distances) and Step 1B (a procedure I’ll keep as a mystery for now).

As usual, we’ll do all this through an example. For simplicity’s sake, I’ll reuse Examples 4.2/4.3 from the previous section which we used to introduce the concept of IQR.

Example 4.4 (A) Weekly Hours Worked, Revisited

If you recall, we had imagined you as a research assistant (RA) on a research project and you had worked 20 weeks in total in the last two semesters, ten weeks in each semester. The maximum hours per week you could work was 15, limited by the nature of your contract.

As there are a lot of calculations to be done, to simplify our job, let’s imagine further that we’re interested in only one of the two semesters you had worked, and these are only the hours in the ten weeks of that one semester:

3, 3, 5, 7, 8, 10, 12, 12, 13, 14

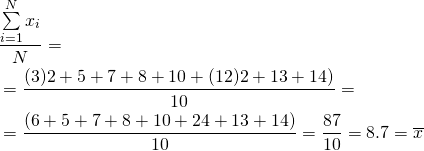

Considering that for Step 1A we need the distances of each of these ten values to the mean, we’ll calculate the mean as a preliminary requirement.[1]
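Written out, the calculation of the mean could look as follows. This is a sketch rather than the original equation: the symbol $\bar{x}$ for the mean is an assumption, and the two repeated values (3 and 12) are multiplied by their frequency of 2 instead of being listed twice.

$$\bar{x} = \frac{(3)(2) + 5 + 7 + 8 + 10 + (12)(2) + 13 + 14}{10} = \frac{87}{10} = 8.7$$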

Armed with the mean of 8.7 hours, we can now proceed to calculate the distance of every value to the mean (i.e., subtract the mean from each value to obtain the difference). I list the values and their respective distances from the mean in the table below.

Table 4.3 Step 1A Calculating Distances To the Mean

| Hours per week, $x_i$ | Distance to the mean, $(x_i - \bar{x})$ |
|---|---|
| 3 | (3 – 8.7) = -5.7 |
| 3 | (3 – 8.7) = -5.7 |
| 5 | (5 – 8.7) = -3.7 |
| 7 | (7 – 8.7) = -1.7 |
| 8 | (8 – 8.7) = -0.7 |
| 10 | (10 – 8.7) = 1.3 |
| 12 | (12 – 8.7) = 3.3 |
| 12 | (12 – 8.7) = 3.3 |
| 13 | (13 – 8.7) = 4.3 |
| 14 | (14 – 8.7) = 5.3 |

Again, as usual, $x_i$ is the value of each and any Case #$i$ (from 1 to 10), and $(x_i - \bar{x})$ is the distance (i.e., difference) between each and any Case #$i$ (from 1 to 10) to the mean.
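If you would rather let a computer do the subtracting, Step 1A can be sketched in a few lines of Python. The variable names (`hours`, `mean`, `distances`) are my own, and the distances are rounded to one decimal only to tidy up floating-point noise, so that they match Table 4.3.

```python
# Weekly hours worked in the ten weeks of one semester (Example 4.4).
hours = [3, 3, 5, 7, 8, 10, 12, 12, 13, 14]

# Preliminary requirement: the mean (sum of values divided by their number).
mean = sum(hours) / len(hours)

# Step 1A: distance of every value to the mean (value minus mean).
# Rounding to one decimal removes tiny floating-point artefacts.
distances = [round(x - mean, 1) for x in hours]

for x, d in zip(hours, distances):
    print(f"{x:>2}  {d:+.1f}")
```

Each printed row pairs a value with its signed distance to the mean, in the same order as the rows of Table 4.3.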

Now if we were to jump directly to Step 2 (summing all distances together) and Step 3 (dividing by the total number), we would be in trouble. You see, since the mean averages all values and provides a “centre” of the variable’s distribution value-wise, the distances of the values below the mean, taken together, equal the distances of the values above the mean, albeit with opposite signs.

That is, the sum of all distances below the mean (i.e., the negative differences) has the same absolute value[2] as the sum of all distances above the mean (i.e., the positive differences). As one sum is negative and the other positive, they cancel each other out: adding them together would result in 0, every time. This is due to the very nature of the calculation of the mean; it’s a mathematical inevitability.
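For those who like to see why this is inevitable, here is a short derivation. It is a sketch in my own notation, assuming $\bar{x}$ stands for the mean, $x_i$ for the value of Case #$i$, and $N$ for the total number of cases. Because the mean is defined as $\bar{x} = \frac{\sum x_i}{N}$, it follows that $N\bar{x} = \sum x_i$, and therefore:

$$\sum_{i=1}^{N}(x_i - \bar{x}) = \sum_{i=1}^{N}x_i - N\bar{x} = \sum_{i=1}^{N}x_i - \sum_{i=1}^{N}x_i = 0$$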

Don’t believe me? Try it. The sum of the distances below the mean is:

$$(-5.7)(2) + (-3.7) + (-1.7) + (-0.7) = -17.5$$

The sum of the distances above the mean is:

$$1.3 + (3.3)(2) + 4.3 + 5.3 = 17.5$$

Thus, the sum of all distances from the mean is

$$(-17.5) + 17.5 = 0$$

Told you: Zero. Every. Time.[3]
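You can also check this by machine. The sketch below uses Python's `fractions.Fraction` so that the arithmetic is exact (ordinary decimal numbers on a computer can leave tiny rounding residue instead of a clean zero). The second list, `other`, is just an arbitrary set of numbers I made up to show the result does not depend on the example.

```python
from fractions import Fraction

# Weekly hours from Example 4.4.
hours = [3, 3, 5, 7, 8, 10, 12, 12, 13, 14]
mean = Fraction(sum(hours), len(hours))  # exactly 87/10

# Signed distances of every value to the mean.
distances = [x - mean for x in hours]

below = sum(d for d in distances if d < 0)  # distances below the mean
above = sum(d for d in distances if d > 0)  # distances above the mean
print(float(below), float(above), float(below + above))  # -17.5 17.5 0.0

# The same check on an arbitrary, made-up set of numbers.
other = [2, 9, 4, 21]
other_mean = Fraction(sum(other), len(other))
other_distances = [x - other_mean for x in other]
print(sum(other_distances))  # 0
```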

So if the sum of the distances to the mean is always zero, then what? How are we to average those distances, since dividing the sum (i.e., zero) by any N would give us zero? Are we to give up?

The thing is, the distances (below and above the mean) only cancel each other out because we consider the distances below the mean as negative. This, however, is somewhat of a mathematical conceptual artifact: in real life, there is no such thing as a negative distance from one thing to another. Imagine yourself standing between two of your friends, one on your left and the other on your right. Let’s assume they both stand a meter away from you: you wouldn’t say that one is a negative meter away while the other is a positive meter away, would you? There are no negative and positive meters, just meters (and well, they are always positive, as distance in the physical sense always is).

Thus we are actually not interested in summing the cases’ distances from the mean as calculated but only in their “positive version” ignoring their signs, i.e., we want their absolute values.

True, we could proceed with our Steps 2 and 3 using only positive distances. Doing so produces an actual dispersion measure called the mean deviation (or mean absolute deviation). The mean deviation is easy to understand and quite intuitive; however (and perhaps to your chagrin), it is rarely used, specifically because we have the variance and standard deviation, which are found to be much more useful (this comes into play in inferential statistics, as you will see in the latter part of this book). Due to its unpopularity, I’ll therefore skip the mean deviation; we’ll have to look for another way of getting only positive numbers for our calculation of the average distance from the mean.[4]

Now stop and think: beside absolute values, is there another way of turning numbers positive?

If you thought of squaring, good for you! A (non-zero) number squared is a positive number: for example, $(-2)^2 = (-2)(-2) = 4$. Thus one other way of getting around our distances-summing-to-zero problem is to square the distances before adding them up! Nifty trick, eh?

Let’s test how this works with our Example 4.4.

Example 4.4 (B) Weekly Hours Worked, Revisited

A reminder: what we are trying to get is a dispersion measure giving us an average distance of the cases to the mean; something to account for the variability of all cases, not just a few (unlike the range and IQR). To make the calculations look more orderly, I add a third column to Table 4.3 above, one with the squared distances. Thus, our mysterious Step 1B is squaring each individual distance.

Table 4.4 Step 1B Squaring Individual Distances

| Hours per week, $x_i$ | Distance to the mean, $(x_i - \bar{x})$ | Squared distance, $(x_i - \bar{x})^2$ |
|---|---|---|
| 3 | (3 – 8.7) = -5.7 | (-5.7)^2 = 32.5 |
| 3 | (3 – 8.7) = -5.7 | (-5.7)^2 = 32.5 |
| 5 | (5 – 8.7) = -3.7 | (-3.7)^2 = 13.7 |
| 7 | (7 – 8.7) = -1.7 | (-1.7)^2 = 2.9 |
| 8 | (8 – 8.7) = -0.7 | (-0.7)^2 = 0.5 |
| 10 | (10 – 8.7) = 1.3 | (1.3)^2 = 1.7 |
| 12 | (12 – 8.7) = 3.3 | (3.3)^2 = 10.9 |
| 12 | (12 – 8.7) = 3.3 | (3.3)^2 = 10.9 |
| 13 | (13 – 8.7) = 4.3 | (4.3)^2 = 18.5 |
| 14 | (14 – 8.7) = 5.3 | (5.3)^2 = 28.1 |
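Step 1B is just as easy to sketch in Python. As before, the variable names are my own. Note that the squares are shown here to two decimals, whereas Table 4.4 rounds them to one (e.g., 32.49 appears in the table as 32.5).

```python
# Weekly hours from Example 4.4.
hours = [3, 3, 5, 7, 8, 10, 12, 12, 13, 14]
mean = sum(hours) / len(hours)

# Step 1B: square each individual distance to the mean.
# Squaring makes every (non-zero) distance positive.
squared = [(x - mean) ** 2 for x in hours]

for x, sq in zip(hours, squared):
    print(f"{x:>2}  {x - mean:+.1f}  {sq:.2f}")
```

Every number in the last printed column is positive, which is exactly what we need before summing.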

We are thus ready for Step 2: summing up the (now-squared) distances from the mean:

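$$\sum_{i=1}^{N}(x_i - \bar{x})^2 = (-5.7)^2(2) + (-3.7)^2 + (-1.7)^2 + (-0.7)^2 + (1.3)^2 + (3.3)^2(2) + (4.3)^2 + (5.3)^2 = 152.1 \quad \leftarrow \text{Sum of Squares}$$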

As you can see above, the sum of the squared distances from the mean is called the sum of squares (sometimes indicated by SS).

Finally, to get the average of the squared distances from the mean we need Step 3: to divide the sum of squares by the total number, N:

$$\frac{\sum_{i=1}^{N}(x_i - \bar{x})^2}{N} = \frac{152.1}{10} = 15.21 \quad \leftarrow \text{variance}$$

That is, the variance of your hours worked per week is 15.21, or the average of the squared distances from the mean is 15.21. (Note that we cannot say 15.21 hours as now we are working in squared units.)
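To tie the steps together, here is a minimal Python sketch of the whole process, from distances to variance. The variable names are my own; the last line is a cross-check against `statistics.pvariance` from Python's standard library, which, like the calculation here, divides the sum of squares by N.

```python
import statistics

# Weekly hours from Example 4.4.
hours = [3, 3, 5, 7, 8, 10, 12, 12, 13, 14]
n = len(hours)
mean = sum(hours) / n

# Steps 1A and 1B: distance to the mean, then squared.
# Step 2: add the squared distances together (the sum of squares).
sum_of_squares = sum((x - mean) ** 2 for x in hours)

# Step 3: divide the sum of squares by the total number of cases.
variance = sum_of_squares / n

print(round(sum_of_squares, 2))               # 152.1
print(round(variance, 2))                     # 15.21
print(round(statistics.pvariance(hours), 2))  # 15.21
```

The rounding is there only to hide floating-point noise; the result is in squared units, just as noted above.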

And this is it, the variance. It is denoted by the lowercase Greek letter s, i.e., σ,[5] and, since it’s in squared units, actually σ² (SIG-ma-squared).[6]

1. Since N=10 or more makes for quite long equations if the values are listed (summed) one by one, from now on I will, as a matter of principle, group values by frequencies in the calculations I do. (I.e., instead of 3+3, here I have (3)(2); instead of 7+7+7, I would have (7)(3), etc.) Coincidentally, this is exactly what we do when working with data organized in a frequency table.

2. The absolute value of a positive number is the number itself; the absolute value of a negative number is the number itself but without the negative sign; the absolute value of zero is zero. Absolute value is denoted with two straight vertical lines. For example, the absolute values of -1 and 1 are equal to each other: |-1| = |1| = 1.

3. If you're still not convinced and think that maybe I selected the numbers on purpose, just so that the distances to their mean add up to zero, you are welcome to try this 'trick' with any set of numbers.

4. For the curious souls out there (all three of them), this is what the mean deviation looks like, using the numbers from Example 4.4 (A) above. As the below-the-mean sum was -17.5 and the above-the-mean sum was 17.5, ignoring the negative signs we would get $17.5 + 17.5 = 35$. Since N=10, by averaging the distances we get $\frac{35}{10} = 3.5$ (the mean absolute deviation). That is, the average distance of a case's value from the mean is 3.5, or, in terms of our example, your weekly hours (which ranged from 3 to 14) on average varied by 3.5 hours from the mean of 8.7 hours, across the ten weeks you worked as a research assistant.

5. It is pronounced SIG-ma, just like Σ, which is the uppercase Greek letter S.

6. An alternative notation for variance you might encounter is var(x), where x is the variable in question.